SpecForge User Guide

SpecForge is an AI-powered formal specification authoring tool based on Lilo, a domain specific language designed for specifying temporal systems.

This guide covers installation, the Lilo specification language, the Python SDK, and using Lilo with VSCode.

To get started, see setting up, releases are available from the releases page. A few example projects can be found in the examples repository or be downloaded from the releases page.

Other versions of this guide:

- In PDF format.

- In Japanese. (このガイドには日本語版もあります.)

You are reading the docs for version 0.5.8 of SpecForge. Navigate to a different URL to view docs for a different version.

Setting up SpecForge

The SpecForge suite consists of a few components:

- The SpecForge Server which is the backend server which the other components connect to. It can be run via Docker or as an executable.

- The SpecForge VSCode Extension which provides Lilo Language support in VSCode for editing and managing specifications, as well as rendering interactive visualizations.

- The SpecForge Python SDK which provides an API for interacting with the SpecForge server from Python code. This can be used to communicate and exchange specifications or data with the SpecForge server from Python scripts or Jupyter notebooks.

All necessary files can be obtained from the SpecForge releases page.

Quick Start

Follow these steps to get started quickly:

- Install dependencies

z3andrsvg-converter(see OS-specific instructions;rsvg-converteris optional) - Download and extract the SpecForge executable for your operating system

- Configure your license (place

license.jsonin the appropriate location for your OS) - (Optional) Configure LLM provider by setting environment variables (e.g.,

SPECFORGE_LLM_PROVIDER=openai,OPENAI_API_KEY=...) - see LLM Provider Configuration - Start the SpecForge server:

./specforge serve(or.\specforge.exe serveon Windows) - Install the VSCode Extension (see docs)

- Create a directory for your project and place your

.lilofiles directly in it - Open the directory in VSCode and start writing specifications

Note: The lilo.toml project configuration file is optional. For initial setup, you can skip it and place your specification and data files directly in the project root. See Project Configuration for details on when and how to use lilo.toml.

Detailed Setup Instructions

Choose your platform for detailed setup instructions:

- Windows - Complete setup guide for Windows

- macOS - Complete setup guide for macOS (Apple Silicon)

- Linux - Complete setup guide for Linux

- Docker - Using Docker instead of the executable

Server Environment Variables

The SpecForge server reads the following environment variables at startup. These can be set before running specforge serve or configured in your Docker Compose file.

| Variable | Type | Default | Description |

|---|---|---|---|

SPECFORGE_PORT | Integer | 8080 | Port the server listens on. |

SPECFORGE_CACHE_BOUND | Integer | 64 | Maximum number of entries in the exemplification cache. The cache stores results from the SMT solver (Z3) to avoid redundant computations. Set to 0 to disable caching. |

SPECFORGE_SEMAPHORE_LIMIT | Integer | 8 | Maximum number of concurrent SMT solver (Z3) instances. Limits parallelism to prevent resource exhaustion. Set to 0 to disable the semaphore (no concurrency limit). |

Example usage:

SPECFORGE_PORT=9090 SPECFORGE_CACHE_BOUND=128 ./specforge serve

For LLM-related environment variables (SPECFORGE_LLM_PROVIDER, SPECFORGE_LLM_MODEL, OPENAI_API_KEY, etc.), see the LLM Provider Configuration guide.

Note that all of these can also be configured in the settings of the SpecForge VSCode extension.

Setting up SpecForge on Windows

This guide will walk you through setting up SpecForge on Windows.

1. Installation

MSI Installer (Recommended)

Download specforge-x.y.z-Windows-X64-en-US.msi from the SpecForge releases page and run the installer.

The MSI installer will:

- Install SpecForge to

C:\Program Files\Imiron\SpecForge\ - Add SpecForge to your system PATH automatically

- Include all required dependencies (Z3, rsvg-convert)

Standalone Executable

Download specforge-x.y.z-Windows-X64.zip from the SpecForge releases page and extract it to a directory of your choice.

Note: With this method, you need to manually install dependencies.

The SpecForge executable requires Z3 and rsvg-converter (optional) to be installed on your system.

Using Chocolatey (Recommended)

Open PowerShell as Administrator and run:

choco install z3 rsvg-convert

If you don’t have Chocolatey, you can install it from chocolatey.org.

Manual Installation

If you prefer not to use a package manager, download Z3 directly from the Z3 releases page and add it to your PATH.

2. Configure Your License

The SpecForge server requires a valid license file to start. If you don’t have a license, please contact the SpecForge team or request a trial license.

Place your license.json file in one of the following locations:

-

Standard Configuration Directory (recommended):

%APPDATA%\specforge\license.json- Typically:

C:\Users\YourUsername\AppData\Roaming\specforge\license.json

-

Environment Variable (for custom locations):

$env:SPECFORGE_LICENSE_FILE="C:\path\to\license.json" -

Current Directory:

.\license.json

Create the directory if it doesn’t exist. You can do this in PowerShell:

New-Item -ItemType Directory -Force -Path "$env:APPDATA\specforge"

Copy-Item "C:\path\to\your\license.json" "$env:APPDATA\specforge\license.json"

3. Configure LLM Provider (Optional)

To use LLM-based features such as natural-language spec generation and error explanation, configure an LLM provider by setting environment variables before starting the server.

For OpenAI (recommended):

$env:SPECFORGE_LLM_PROVIDER="openai"

$env:SPECFORGE_LLM_MODEL="gpt-5-nano-2025-08-07"

$env:OPENAI_API_KEY="your-api-key-here"

Get an API key from platform.openai.com/api-keys.

For other providers (Gemini, Anthropic, Ollama), see the LLM Provider Configuration guide.

4. Start the Server

If you used the MSI installer, run from any directory:

specforge serve

If you used the standalone executable, navigate to the directory where you extracted the SpecForge executable and run:

.\specforge.exe serve

The server will start on http://localhost:8080. You can verify it’s running by navigating to http://localhost:8080/health, which should show version information.

Note: The server will exit immediately if the license is missing or invalid. If you encounter startup issues, verify your license configuration.

5. Install the VSCode Extension

Install the SpecForge VSCode extension from the Visual Studio Marketplace or see the VSCode Extension setup guide.

6. Install the Python SDK (Optional)

The Python SDK enables interaction with the SpecForge server programmatically from Python. This can be used to embed SpecForge analyses in Python notebooks and directly feed and retrieve data using Pandas Dataframes. See the Python SDK setup guide for instructions on how to set it up.

Further Reading

- VSCode Extension - Learn about the VSCode extension features

- Python SDK - Set up the Python SDK for programmatic access

- A Whirlwind Tour - Take a tour of SpecForge capabilities

- Project Configuration - Learn about

lilo.tomlconfiguration

Setting up SpecForge on macOS

This guide will walk you through setting up SpecForge on macOS using the standalone executable.

1. Download the Executable

Download specforge-x.y.z-macOS-ARM64.tar.bz2 from the SpecForge releases page and extract it to a directory of your choice.

2. Install Dependencies

The SpecForge executable requires Z3 to be installed. rsvg-converter is optional but recommended for SVG-to-PNG conversion used by the animation feature.

Install using Homebrew:

brew install z3 librsvg

If you don’t have Homebrew, install it from brew.sh.

Note:

librsvgprovides thersvg-convertcommand. Without it, theanimate()feature in the Python SDK will not be able to render PNG frames from SVG visualizations. All other SpecForge features work without it.

3. Configure Your License

The SpecForge server requires a valid license file to start. If you don’t have a license, please contact the SpecForge team or request a trial license.

Place your license.json file in one of the following locations:

-

Standard Configuration Directory (recommended):

~/.config/specforge/license.json

-

Environment Variable (for custom locations):

export SPECFORGE_LICENSE_FILE=/path/to/license.json -

Current Directory:

./license.json

Create the directory if it doesn’t exist:

mkdir -p ~/.config/specforge

cp /path/to/your/license.json ~/.config/specforge/

4. Configure LLM Provider (Optional)

To use LLM-based features such as natural-language spec generation and error explanation, configure an LLM provider by setting environment variables before starting the server.

For OpenAI (recommended):

export SPECFORGE_LLM_PROVIDER=openai

export SPECFORGE_LLM_MODEL=gpt-5-nano-2025-08-07

export OPENAI_API_KEY=your-api-key-here

Get an API key from platform.openai.com/api-keys.

For other providers (Gemini, Anthropic, Ollama), see the LLM Provider Configuration guide.

5. Start the Server

Navigate to the directory where you extracted the SpecForge executable and run:

./specforge serve

The server will start on http://localhost:8080. You can verify it’s running by navigating to http://localhost:8080/health, which should show version information.

Note: The server will exit immediately if the license is missing or invalid. If you encounter startup issues, verify your license configuration.

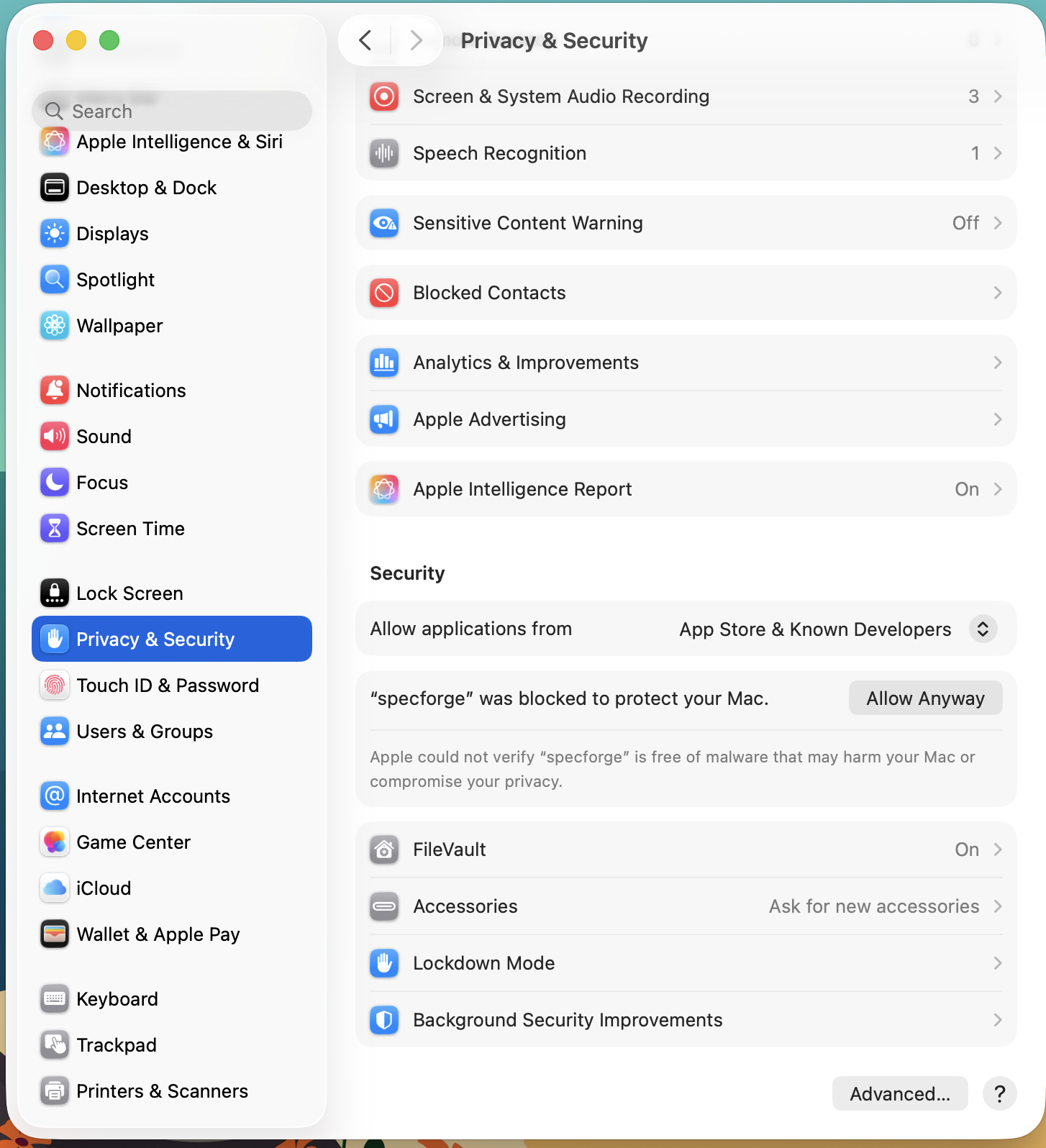

Allowing Execution of the Downloaded SpecForge Binary

The MacOS Gatekeeper may display an alert preventing you from executing the downloaded binary, because it was downloaded from a third-party source.

To whitelist the specforge executable, run the following command.

xattr -d com.apple.quarantine path/to/specforge

Alternatively, you can do so from the System Settings GUI by following these steps.

- Open System Settings, and go to ‘Privacy & Security’

- In the security section, you should see ‘“specforge” was blocked to protect your Mac.’

- Click ‘Open Anyway’.

6. Install the VSCode Extension

Install the SpecForge VSCode extension from the Visual Studio Marketplace or see the VSCode Extension setup guide.

7. Install the Python SDK (Optional)

The Python SDK enables interaction with the SpecForge server programmatically from Python. This can be used to embed SpecForge analyses in Python notebooks and directly feed and retrieve data using Pandas Dataframes. See the Python SDK setup guide for instructions on how to set it up.

Further Reading

- VSCode Extension - Learn about the VSCode extension features

- Python SDK - Set up the Python SDK for programmatic access

- A Whirlwind Tour - Take a tour of SpecForge capabilities

- Project Configuration - Learn about

lilo.tomlconfiguration

Setting up SpecForge on Linux

This guide will walk you through setting up SpecForge on Linux using the standalone executable.

1. Download the Executable

Download specforge-x.y.z-Linux-X64.tar.bz2 from the SpecForge releases page and extract it to a directory of your choice.

2. Install Dependencies

The SpecForge executable requires Z3 to be installed. rsvg-converter is optional but recommended for SVG-to-PNG conversion used by the animation feature.

Use your distribution’s package manager:

Ubuntu/Debian:

sudo apt install z3 librsvg2-bin

Fedora/RHEL:

sudo dnf install z3 librsvg2-tools

Arch Linux:

sudo pacman -S z3 librsvg

Note: The

librsvg2-bin/librsvg2-tools/librsvgpackage provides thersvg-convertcommand. Without it, theanimate()feature in the Python SDK will not be able to render PNG frames from SVG visualizations. All other SpecForge features work without it.

3. Configure Your License

The SpecForge server requires a valid license file to start. If you don’t have a license, please contact the SpecForge team or request a trial license.

Place your license.json file in one of the following locations:

-

Standard Configuration Directory (recommended):

~/.config/specforge/license.json

-

Environment Variable (for custom locations):

export SPECFORGE_LICENSE_FILE=/path/to/license.json -

Current Directory:

./license.json

Create the directory if it doesn’t exist:

mkdir -p ~/.config/specforge

cp /path/to/your/license.json ~/.config/specforge/

4. Configure LLM Provider (Optional)

To use LLM-based features such as natural-language spec generation and error explanation, configure an LLM provider by setting environment variables before starting the server.

For OpenAI (recommended):

export SPECFORGE_LLM_PROVIDER=openai

export SPECFORGE_LLM_MODEL=gpt-5-nano-2025-08-07

export OPENAI_API_KEY=your-api-key-here

Get an API key from platform.openai.com/api-keys.

For other providers (Gemini, Anthropic, Ollama), see the LLM Provider Configuration guide.

5. Start the Server

Navigate to the directory where you extracted the SpecForge executable and run:

./specforge serve

The server will start on http://localhost:8080. You can verify it’s running by navigating to http://localhost:8080/health, which should show version information.

Note: The server will exit immediately if the license is missing or invalid. If you encounter startup issues, verify your license configuration.

6. Install the VSCode Extension

Install the SpecForge VSCode extension from the Visual Studio Marketplace or see the VSCode Extension setup guide.

7. Install the Python SDK (Optional)

The Python SDK enables interaction with the SpecForge server programmatically from Python. This can be used to embed SpecForge analyses in Python notebooks and directly feed and retrieve data using Pandas Dataframes. See the Python SDK setup guide for instructions on how to set it up.

Further Reading

- VSCode Extension - Learn about the VSCode extension features

- Python SDK - Set up the Python SDK for programmatic access

- A Whirlwind Tour - Take a tour of SpecForge capabilities

- Project Configuration - Learn about

lilo.tomlconfiguration

Setting up SpecForge with Docker

This guide will walk you through setting up SpecForge using Docker instead of the standalone executable.

Prerequisites

Before starting the server, you must obtain and configure a valid license. If you don’t have a license, please contact the SpecForge team or request a trial license.

1. Obtain the Docker Compose File

The SpecForge Server is distributed as a Docker Image via GHCR (GitHub Container Registry). The recommended way to run the Docker Image is through Docker Compose.

Download the latest docker-compose-x.y.z.yml file from the SpecForge releases page.

2. Configure Your License

The SpecForge server requires a valid license file. You need to make the license file available to the Docker container.

-

Place your

license.jsonfile in a new directory. Using/home/user/.config/specforge/is a common practice. -

Modify the following lines in your

docker-compose-x.y.z.ymlfile to point to your license file:- type: bind source: path/to/.config/specforge/ # place your license.json file here on the host machine target: /app/specforgeconfig/ # config directory inside the container (do not modify this) read_only: trueNote: It is not recommended to run docker as

root(i.e. withsudo). But if you do, note that paths with~/would be understood by the system as/root/, not your home directory. So it’s best to use absolute paths (without~).

3. Configure LLM Provider (Optional)

To use LLM-based features such as natural-language spec generation and error explanation, configure an LLM provider by modifying environment variables in your docker-compose.yml file before starting the server.

For OpenAI (recommended):

- SPECFORGE_LLM_PROVIDER=openai

- SPECFORGE_LLM_MODEL=gpt-5-nano-2025-08-07

- OPENAI_API_KEY=${OPENAI_API_KEY}

Get an API key from platform.openai.com/api-keys.

You can insert API keys directly in the file, but using environment variables is better for security.

For other providers (Gemini, Anthropic, Ollama) and detailed configuration options, see the LLM Provider Configuration guide.

4. Start the Server

Run the following command, replacing /path/to/docker-compose-x.y.z.yml with the actual path to your downloaded file:

docker compose -f /path/to/docker-compose-x.y.z.yml up --abort-on-container-exit

The flag

--abort-on-container-exitis recommended so that the container fails fast on startup errors.

You can verify that the server is up by navigating to http://localhost:8080/health, which should show version information.

Note: The server will exit immediately if the license is missing or invalid. If you encounter startup issues, verify your license configuration.

5. Install the VSCode Extension

Install the SpecForge VSCode extension from the Visual Studio Marketplace or see the VSCode Extension setup guide.

Updating the Docker Image

From time to time, new versions of the SpecForge Server are released. To use the latest version, you can either:

-

Use the updated docker-compose file from the releases page, or

-

Set the image field to latest in your Docker Compose file:

image: ghcr.io/imiron-io/specforge/specforge-backend:latestThen pull the latest image:

docker compose -f /path/to/docker-compose.yml pull

Next Steps

- VSCode Extension - Learn about the VSCode extension features

- Python SDK - Set up the Python SDK for programmatic access

- A Whirlwind Tour - Take a tour of SpecForge capabilities

- Project Configuration - Learn about

lilo.tomlconfiguration

LLM Provider Configuration

SpecForge includes LLM-based features such as natural-language based spec generation and error explanation. To use these features, you need to configure an LLM provider.

Supported Providers

SpecForge currently supports three LLM providers:

- OpenAI - Cloud-based API (recommended for most users)

- Gemini - Google’s cloud-based API

- Ollama - Run models locally on your machine

Configuration Methods

For Executable (Windows, macOS, Linux)

Set the following environment variables before starting the SpecForge server:

OpenAI

# Linux / macOS

export SPECFORGE_LLM_PROVIDER=openai

export SPECFORGE_LLM_MODEL=gpt-5-nano-2025-08-07

export OPENAI_API_KEY=your-api-key-here

# Windows PowerShell

$env:SPECFORGE_LLM_PROVIDER="openai"

$env:SPECFORGE_LLM_MODEL="gpt-5-nano-2025-08-07"

$env:OPENAI_API_KEY="your-api-key-here"

Get an API key from platform.openai.com/api-keys.

Gemini

# Linux / macOS

export SPECFORGE_LLM_PROVIDER=gemini

export SPECFORGE_LLM_MODEL=gemini-2.5-flash

export GEMINI_API_KEY=your-api-key-here

# Windows PowerShell

$env:SPECFORGE_LLM_PROVIDER="gemini"

$env:SPECFORGE_LLM_MODEL="gemini-2.5-flash"

$env:GEMINI_API_KEY="your-api-key-here"

Get an API key from ai.google.dev/gemini-api/docs/api-key.

Anthropic

# Linux / macOS

export SPECFORGE_LLM_PROVIDER=anthropic

export SPECFORGE_LLM_MODEL=claude-haiku-4-5

export ANTHROPIC_API_KEY=your-api-key-here

# Windows PowerShell

$env:SPECFORGE_LLM_PROVIDER="anthropic"

$env:SPECFORGE_LLM_MODEL="claude-haiku-4-5"

$env:ANTHROPIC_API_KEY="your-api-key-here"

Get an API key from platform.claude.com/docs/en/api/admin/api_keys/retrieve.

Ollama

First, install and run Ollama from docs.ollama.com/quickstart.

Then set the environment variables:

# Linux / macOS

export SPECFORGE_LLM_PROVIDER=ollama

export SPECFORGE_LLM_MODEL=your-model-name # e.g., llama3.2, mistral

export OLLAMA_API_BASE=http://127.0.0.1:11434

# Windows PowerShell

$env:SPECFORGE_LLM_PROVIDER="ollama"

$env:SPECFORGE_LLM_MODEL="your-model-name" # e.g., llama3.2, mistral

$env:OLLAMA_API_BASE="http://127.0.0.1:11434"

Change OLLAMA_API_BASE if your Ollama server is running on a different machine.

For Docker

Modify the environment variables in your docker-compose.yml file:

- SPECFORGE_LLM_PROVIDER=openai # other options: ollama, gemini

- SPECFORGE_LLM_MODEL=gpt-5-nano-2025-08-07 # choose the appropriate model for your provider

# One of the following, depending on SPECFORGE_LLM_PROVIDER:

- OPENAI_API_KEY=${OPENAI_API_KEY}

- GEMINI_API_KEY=${GEMINI_API_KEY}

- OLLAMA_API_BASE=http://127.0.0.1:11434 # change if your ollama server is running remotely

You can insert API keys directly in the file:

- SPECFORGE_LLM_PROVIDER=gemini

- GEMINI_API_KEY=abc123XYZ # no string quotes

However, it is better to use environment variables for security.

Default Models

If you don’t set the SPECFORGE_LLM_MODEL variable:

- OpenAI: Defaults to

gpt-5-nano-2025-08-07 - Gemini: Defaults to

gemini-2.5-flash - Anthropic: Defaults to

claude-haiku-4-5 - Ollama: You must specify a model (no default)

Without LLM Configuration

Without an appropriate LLM provider configuration, LLM-based SpecForge features will be unavailable. The rest of SpecForge will continue to work normally.

VSCode Extension

The Lilo Language Extension for VSCode provides

- Syntax Highlighting, Typechecking and Autocompletion for

.lilofiles - Satisfiability and Redundancy checking for specifications

- Support for visualizing monitoring results in Python notebooks

Installation

The SpecForge VSCode extension can be installed in two ways:

From the VSCode Marketplace

Install the extension directly from the Visual Studio Marketplace or search for “SpecForge” in VSCode’s extensions tab (Ctrl+Shift+X or Cmd+Shift+X).

From VSIX File

Alternatively, you can install from a VSIX file (included in releases):

- Open VSCode’s extensions tab (Ctrl+Shift+X or Cmd+Shift+X), click on the three dots at the top right, and select

Install from VSIX... - Open VSCode’s command palette (Ctrl+Shift+P or Cmd+Shift+P), type

Extensions: Install from VSIX..., and select the.vsixfile

Important: Ensure the extension version matches your SpecForge server version. Version mismatches may cause compatibility issues.

Usage and Configuration

For the extension to work, the SpecForge server must be accessible. There are two ways to connect:

-

Managed server (

specforge.spawnServer): Enable this setting and the extension will start and manage the SpecForge server process for you. Configurespecforge.serverPathif thespecforgebinary is not on yourPATH, andspecforge.preferredPortto choose a port. -

External server (default): Run the SpecForge server yourself (see Setting up SpecForge) and point the extension at it using the

specforge.apiBaseUrlsetting (default:http://localhost:8080).

Once the extension is installed and the server is running, it should automatically be working on .lilo files, and in relevant Python notebooks.

The VSCode workspace should be a directory which contains a specific lilo project. The extension will use the lilo.toml file at the root of the workspace to determine certain details about the project. Refer to the Python SDK setup guide to see an example of a project structure.

For a full reference of all available settings (LLM integration, server tuning, tracing, etc.), see the Configuration section of the VSCode Extension guide.

Setting up the Python SDK

The Python SDK is a python library which can be used to interact with SpecForge tools programmatically from within Python programs, including notebooks.

The Python SDK is packaged as a wheel file with the name specforge_sdk-x.x.x-py3-none-any.whl.

Refer to the SpecForge Python SDK guide for an overview of the SDK features and capabilities.

A Sample Walkthrough

The Python SDK can be installed directly using pip, or defined as a dependency via a build envionment such as poetry or uv.

We discuss below how such an environment can be setup using uv. If you prefer to use a different build system, the workflow should be similar.

The recommended way to use uv is to set up a pyproject.toml file for each project separately. This would allow you to manage dependencies on a per-project basis, and avoid conflicts between different projects. Here is what a typical directory structure would look like:

sample_project

├── data

│ ├── first60.csv

│ ├── last60.csv

│ └── sampled.csv

├── lib

│ └── specforge_sdk-0.5.7-py3-none-any.whl

├── scripts

│ └── script.py

├── .venv

│ └── (managed by uv)

├── lilo.toml

├── explore.ipynb

├── main.lilo

├── pyproject.toml

└── uv.lock

-

Install

uvon your operating system. See the uv installation guide for more details. -

Create a new project directory and navigate into it. Populate it with a

pyproject.tomlfile. -

Declare the dependencies in the

pyproject.tomlfile.- The wheel file for the Python SDK can be declared as a local dependency. Ensure that a correct path to the wheel file is provided.

- Features of SpecForge, such as the interactive monitor, can be used as a part of Python Notebooks. To do so, you may want to include

jupyterlabas a dependency as well. - Libraries such as

numpy,pandasandmatplotlibare frequently included for data processing and visualization. - Here is an example

pyproject.tomlfile:

[project] name = "sample-project" version = "0.1.0" description = "Sample Project for Testing SpecForge SDK" authors = [{ name = "Imiron Developers", email = "info@imiron.io" }] readme = "README.md" requires-python = ">=3.12" dependencies = [ "jupyterlab>=4.4.5", "pandas>=2.3.1", "matplotlib>=3.10.3", "numpy>=2.3.2", "specforge-sdk", ] [tool.uv.sources] specforge_sdk = { path = "lib/specforge_sdk-0.5.8-py3-none-any.whl" } -

Run

uv sync. This should create a.venvdirectory which would have the appropriate dependencies (including the correct version of python) installed.- Note that this

.venvis unique to this project. Each project should have its own.venvdirectory, meaning that there should be a separatepyproject.tomlfile for each project, and you would have to runuv syncfor each project separately.

- Note that this

-

Run

source .venv/bin/activateto use the Shell Hook with access topython. You can confirm that this has been configured correctly as follows.$ source .venv/bin/activate (sample-project) $ which python /path/to/project/sample-project/.venv/bin/python -

Now, you can browse the example notebooks. Make sure that your notebook is connected to the kernel in the

.venv. This is usually configured automatically, but can also be done manually. To do so, runjupyter serverand copy and paste the server URL in the kernel settings in the VSCode notebook viewer.

Project Configuration

Lilo projects can use an optional lilo.toml configuration file at the project root.

For getting started, you can skip this configuration entirely and simply place your .lilo specification files and data files directly in the root of your project. SpecForge will work with sensible defaults.

When you do use lilo.toml, if the file or any of its fields are missing, sensible defaults apply. The Python SDK and the VS Code extension read this file and apply the semantics accordingly.

The configuration file is useful for:

- Setting a project name and custom source path (default: project root or

src/) - Customizing language behavior (interval mode, freeze)

- Adjusting diagnostics settings (consistency, redundancy, optimize, unused defs) and their timeouts

- Registering

system_falsifierentries for falsification analysis

Below are the schema and defaults, followed by a complete example.

Schema and defaults

Top-level keys and their defaults when omitted:

-

projectname(string).- Default:

"". - On init: set to the provided name; otherwise to the name of the project root directory.

- Default:

source(path string). Default:"src/"

-

languageinterval.mode(string). Supported:"static". Default:"static"freeze.enabled(bool). Default:true

-

diagnosticsconsistency.enabled(bool). Default:trueconsistency.timeouts— SMT solver timeouts for consistency checks:named(seconds, float). Timeout per individual named specification. Default:0.5system(seconds, float). Timeout for the whole-system consistency check (all specs checked together). Default:1.0

redundancy.enabled(bool). Default:trueredundancy.timeouts— SMT solver timeouts for redundancy checks:named(seconds, float). Timeout per individual named specification. Default:0.5system(seconds, float). Timeout for the whole-system redundancy check (all specs checked together). Default:1.0

optimize.enabled(bool). Default:trueunused_defs.enabled(bool). Default:true

-

[[system_falsifier]](array of tables, optional)- Each entry:

name(string),system(string),script(string) - If absent or empty, the key is omitted from the file and treated as an empty list

- Each entry:

Default file:

[project]

name = ""

source = "src/"

Example lilo.toml

An example project with overrides.

[project]

name = "my-specs"

source = "src/"

[language]

freeze.enabled = true

interval.mode = "static"

[diagnostics.consistency]

enabled = true

[diagnostics.consistency.timeouts]

named = 5.0

system = 10.0

[diagnostics.optimize]

enabled = true

[diagnostics.redundancy]

enabled = false

[diagnostics.unused_defs]

enabled = false

[[system_falsifier]]

name = "Psitaliro ClimateControl Falsifier"

system = "climate_control"

script = "falsifiers/falsify_climate_control.py"

[[system_falsifier]]

name = "Psitaliro ALKS falisifier"

system = "lane_keeping"

script = "falsifiers/alks.py"

A Whirlwind Tour

This section is a quick introduction to SpecForge’s main capabilities through a hands-on example. We’ll explore how to write specifications in the Lilo language and analyze them using SpecForge’s VSCode extension.

The Lilo Language: A Brief Introduction

Lilo is an expression-based temporal specification language designed for hybrid systems. Here are the key concepts:

Primitive Types: Bool, Int, Float, and String

Operators: Standard arithmetic (+, -, *, /), comparisons (==, <, >, etc.), and logical operators (&&, ||, =>)

Temporal Operators: Lilo’s distinguishing feature is its rich set of temporal logic operators:

always φ:φis true at all future timeseventually φ:φis true at some future timepast φ:φwas true at some past timehistorically φ:φwas true at all past times

These operators can be qualified with time intervals, e.g., eventually[0, 10] φ means φ becomes true within 10 time units. More operators are available.

Systems: Lilo specifications are organized into systems that group together:

signals: Time-varying input values (e.g.,signal temperature: Float)params: Non-temporal parameters that are not time-varying (e.g.,param max_temp: Float)types: Custom types for structured datadefinitions: Reusable definitions and helper functionsspecifications: Requirements that should hold for the system

A system file begins with a system declaration like system temperature_control and contains all the declarations for that system.

For a comprehensive guide to the language, see the Lilo Language chapter.

Running Example

We’ll use a temperature control system as our running example. This example project is available in the releases. The system monitors temperature and humidity sensors, with specifications ensuring values remain within safe ranges:

system temperature_sensor

// Temperature Monitoring specifications

// This spec defines safety requirements for a temperature sensor system

import util use { in_bounds }

signal temperature: Float

signal humidity: Float

param min_temperature: Float

param max_temperature: Float

#[disable(redundancy)]

spec temperature_in_bounds = in_bounds(temperature, min_temperature, max_temperature)

spec always_in_bounds = always temperature_in_bounds

// Humidity should be reasonable when temperature is in normal range

spec humidity_correlation = always (

(temperature >= 15.0 && temperature <= 35.0) =>

(humidity >= 20.0 && humidity <= 80.0)

)

// Emergency condition - temperature exceeds critical thresholds

spec emergency_condition = temperature < 5.0 || temperature > 45.0

// Recovery specification - after emergency, system should stabilize

spec recovery_spec = always (

emergency_condition =>

eventually[0, 10] (temperature >= 15.0 && temperature <= 35.0)

)

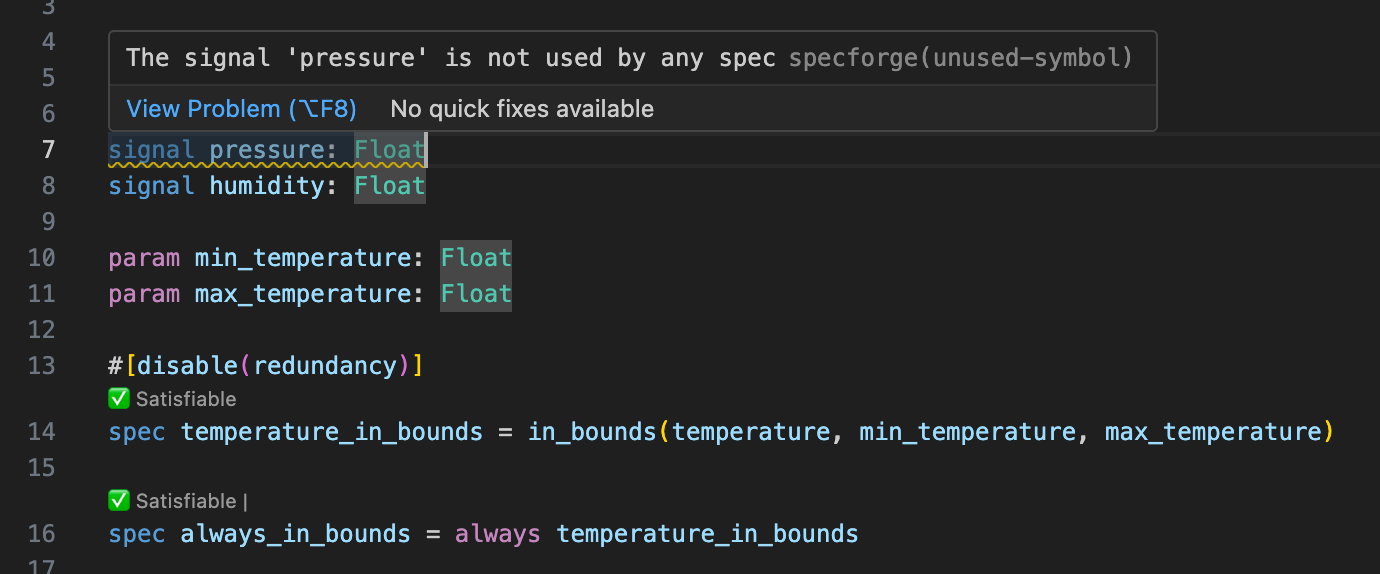

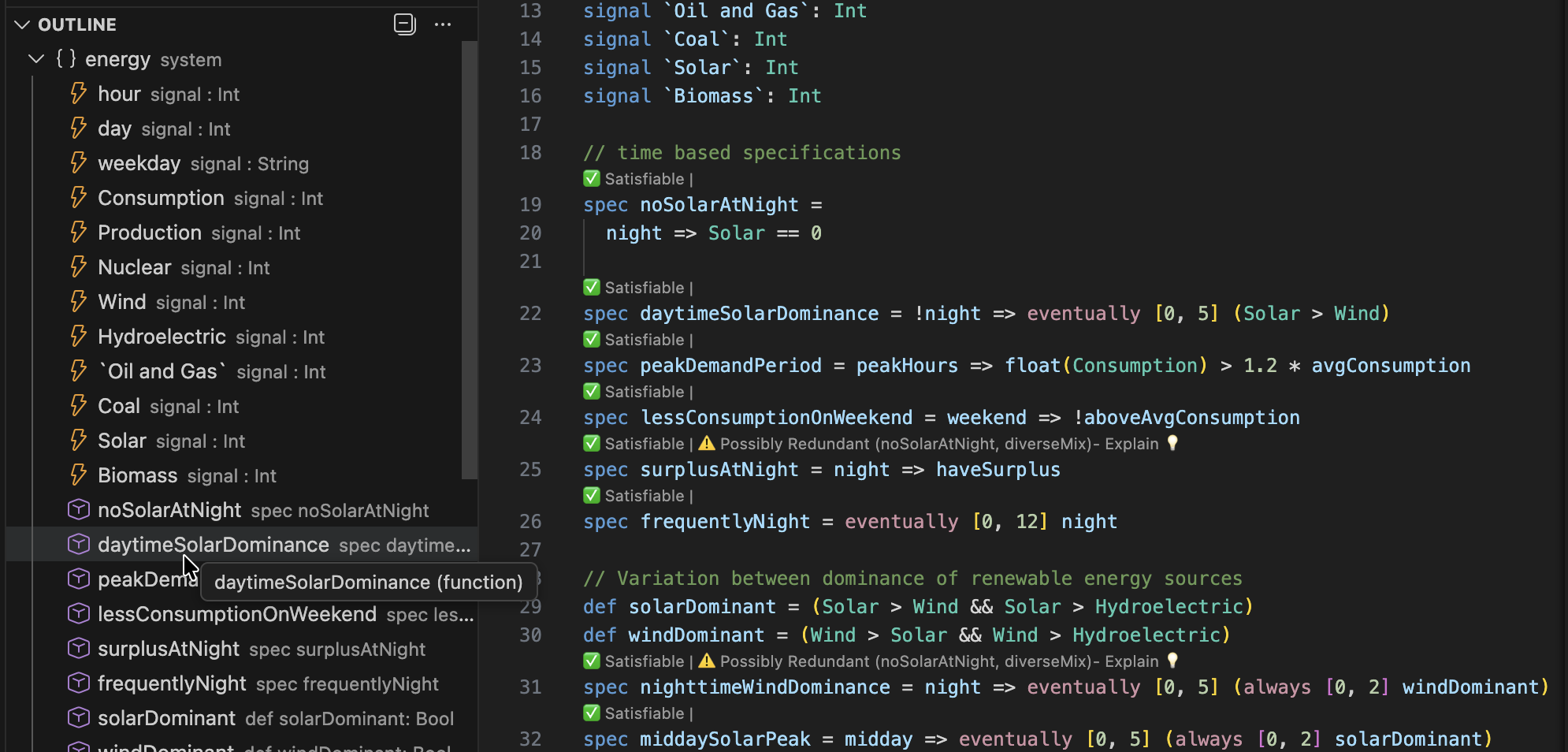

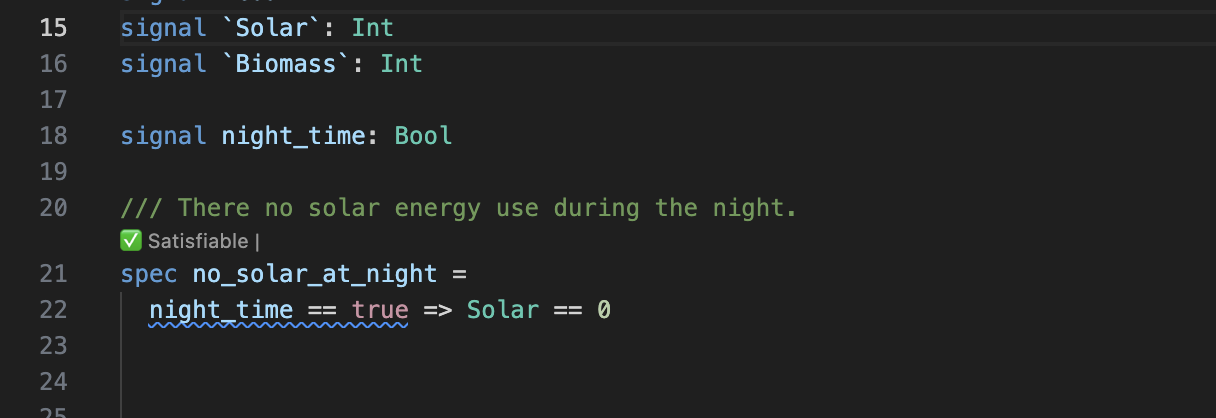

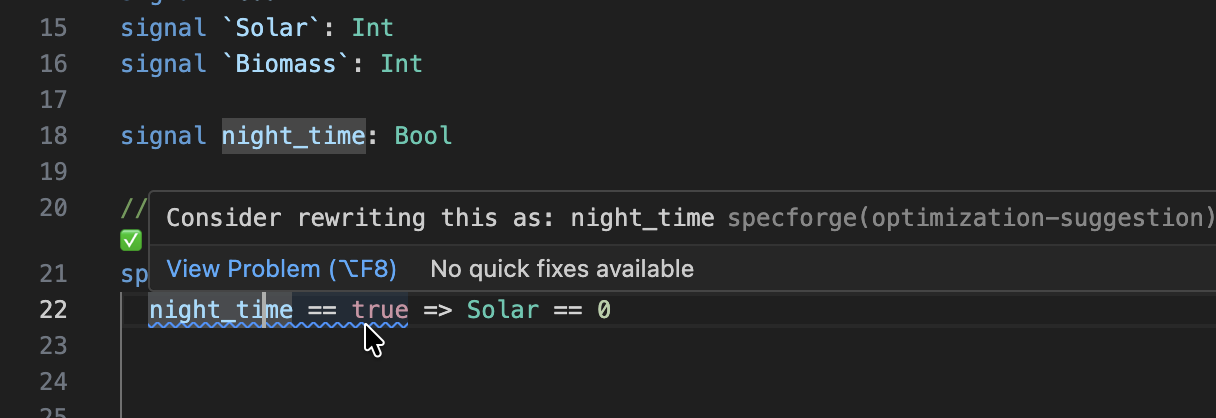

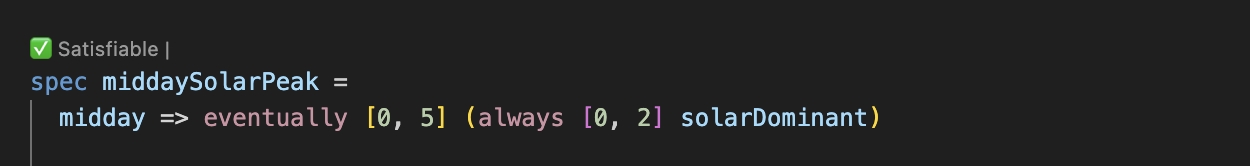

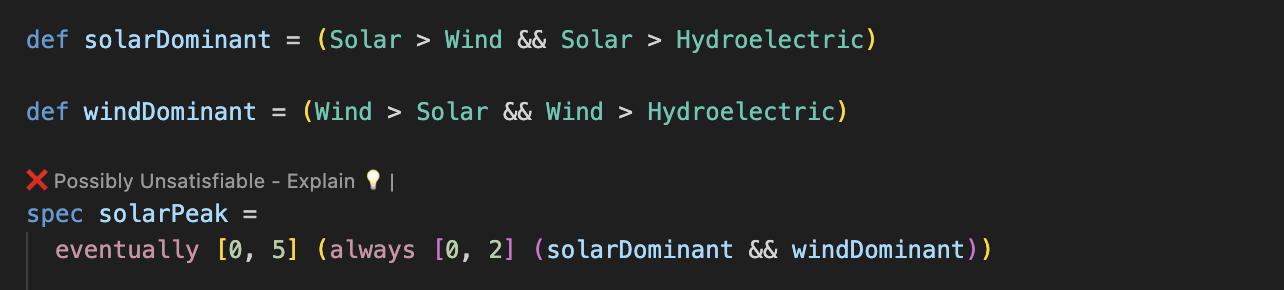

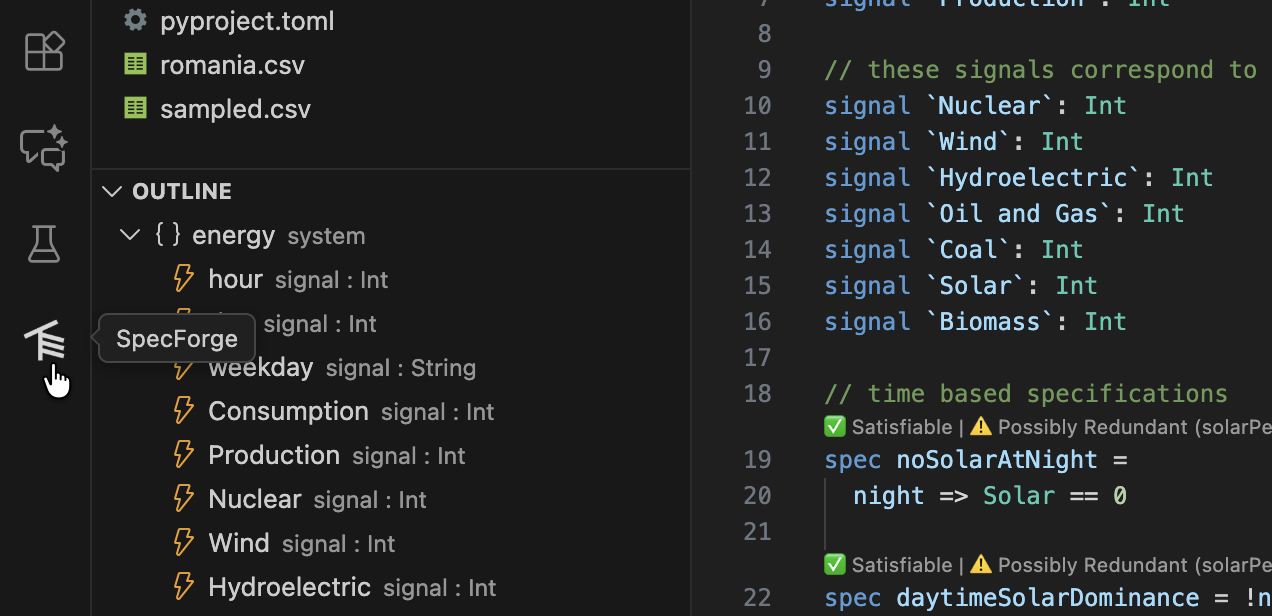

The VSCode extension provides support for writing Lilo code, syntax highlighting, type-checking, warnings, spec satisfiability, etc.:

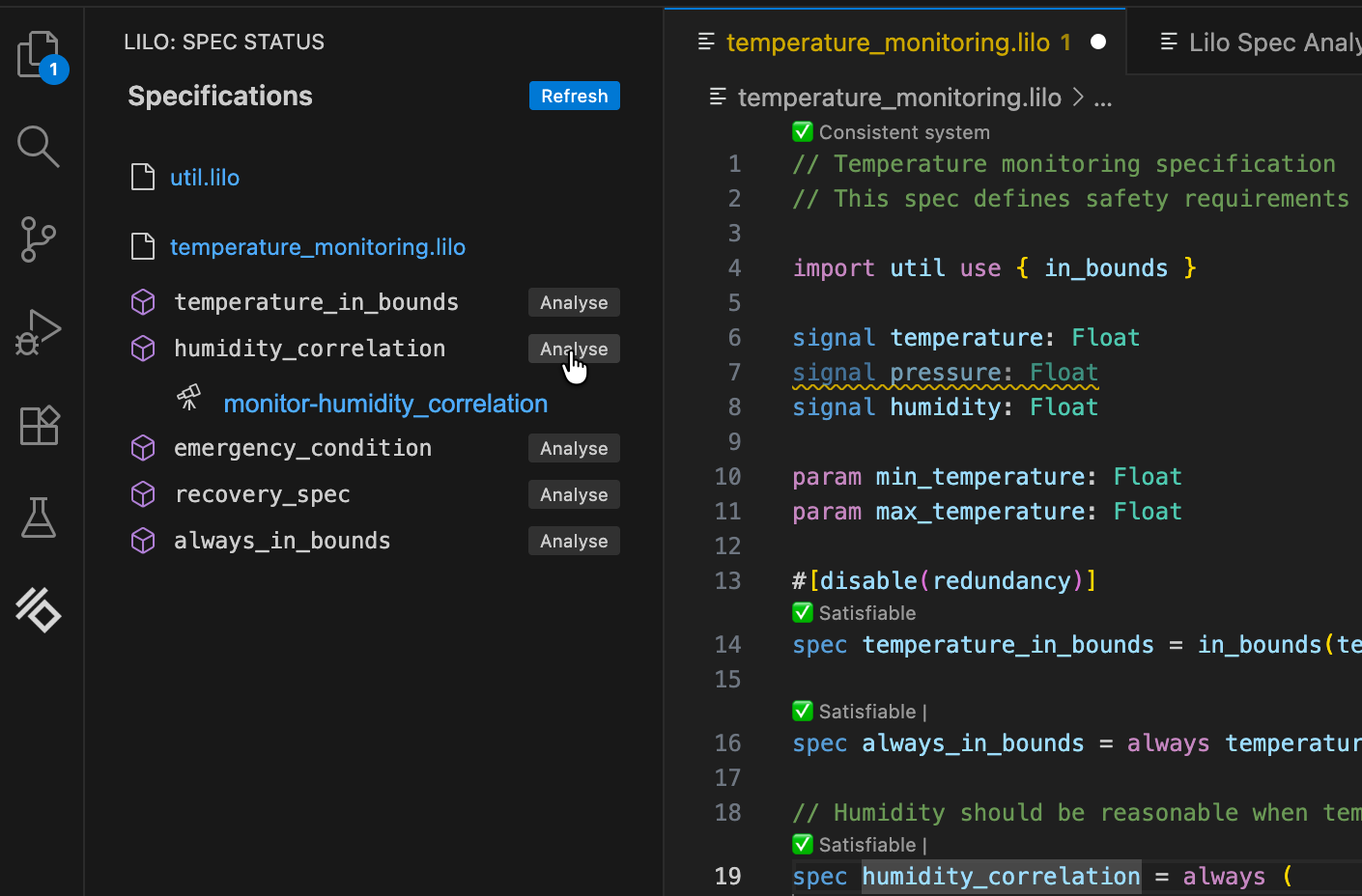

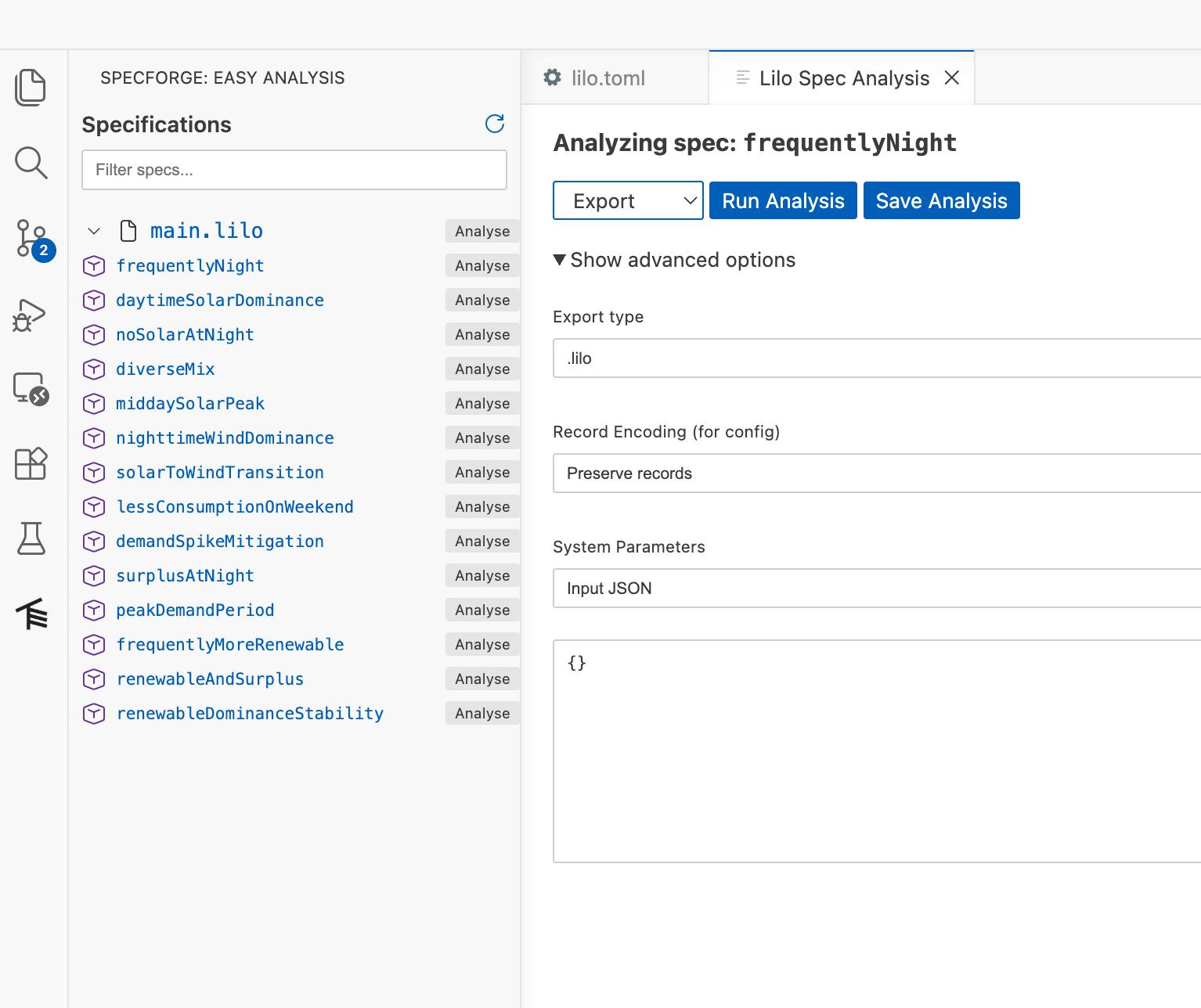

Spec Analysis

Once you’ve written specifications for your system, the SpecForge VSCode extension provides various analysis capabilities:

- Monitor: Check whether recorded system behavior satisfies specifications

- Exemplify: Generate example traces that satisfy specifications

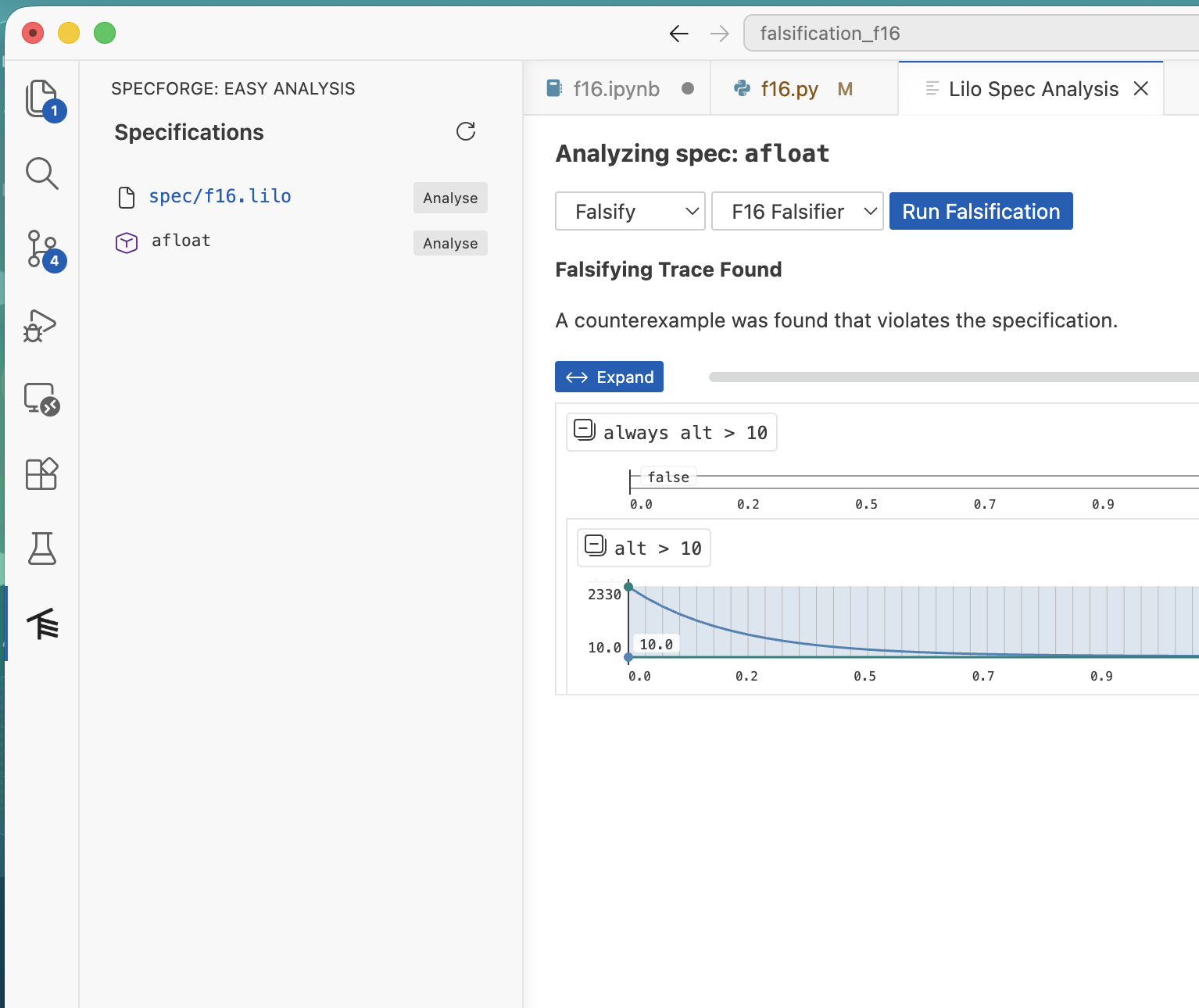

- Falsify: Search for counterexamples that violate specifications, relative to some model

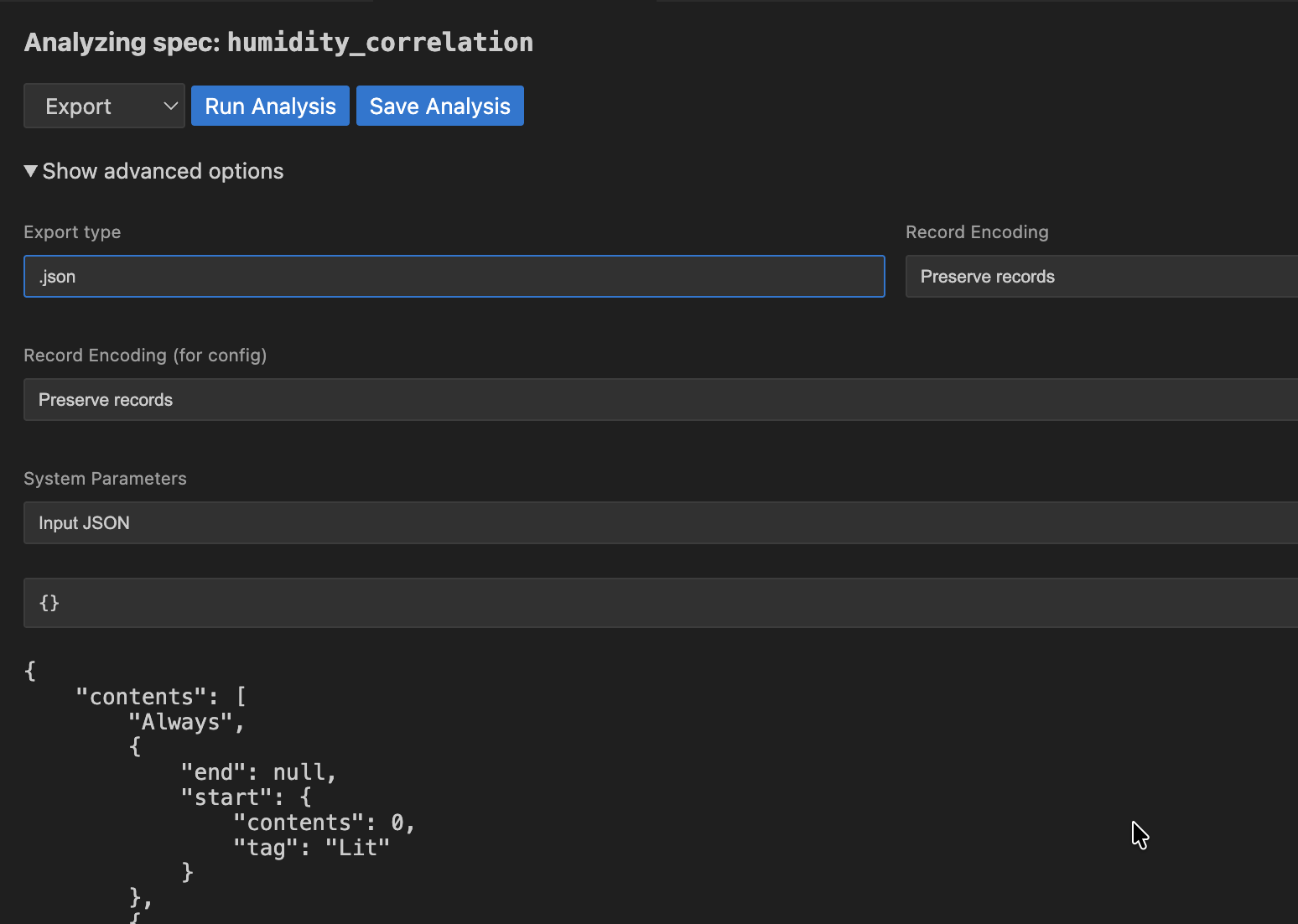

- Export: Convert specifications to other formats (

.json,.lilo, etc.) - Animate: Visualize specification behavior over time

This can be done directly from within VSCode, or from within in a Jupyter notebook using the Python SDK. We will perform analyses directly in VSCode here. The VSCode guide details all features in greater depth.

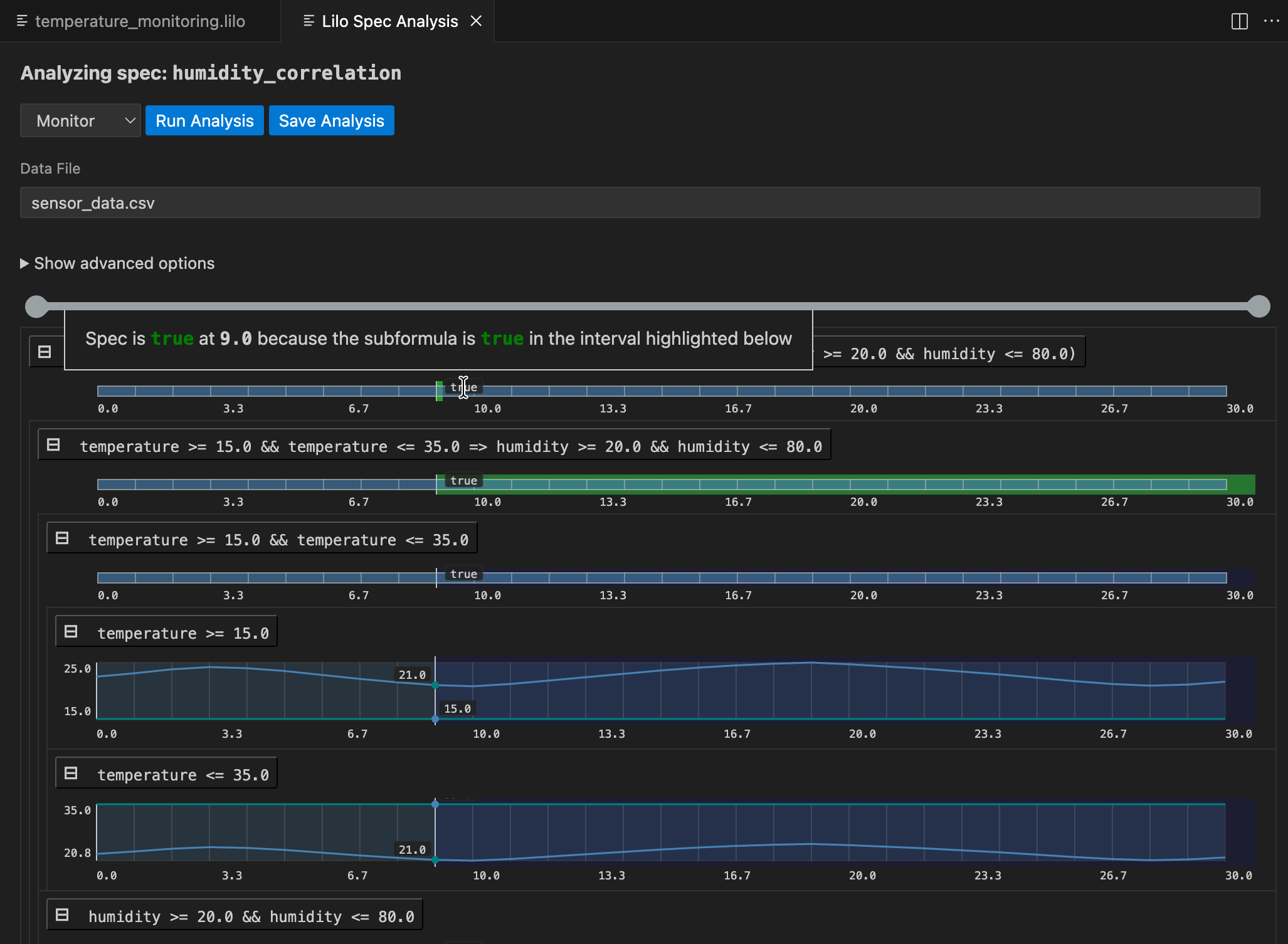

Monitoring

Monitoring checks whether actual system behavior, recorded in a data file, satisfies your specifications. You provide recorded trace data, and SpecForge evaluates a specification against it.

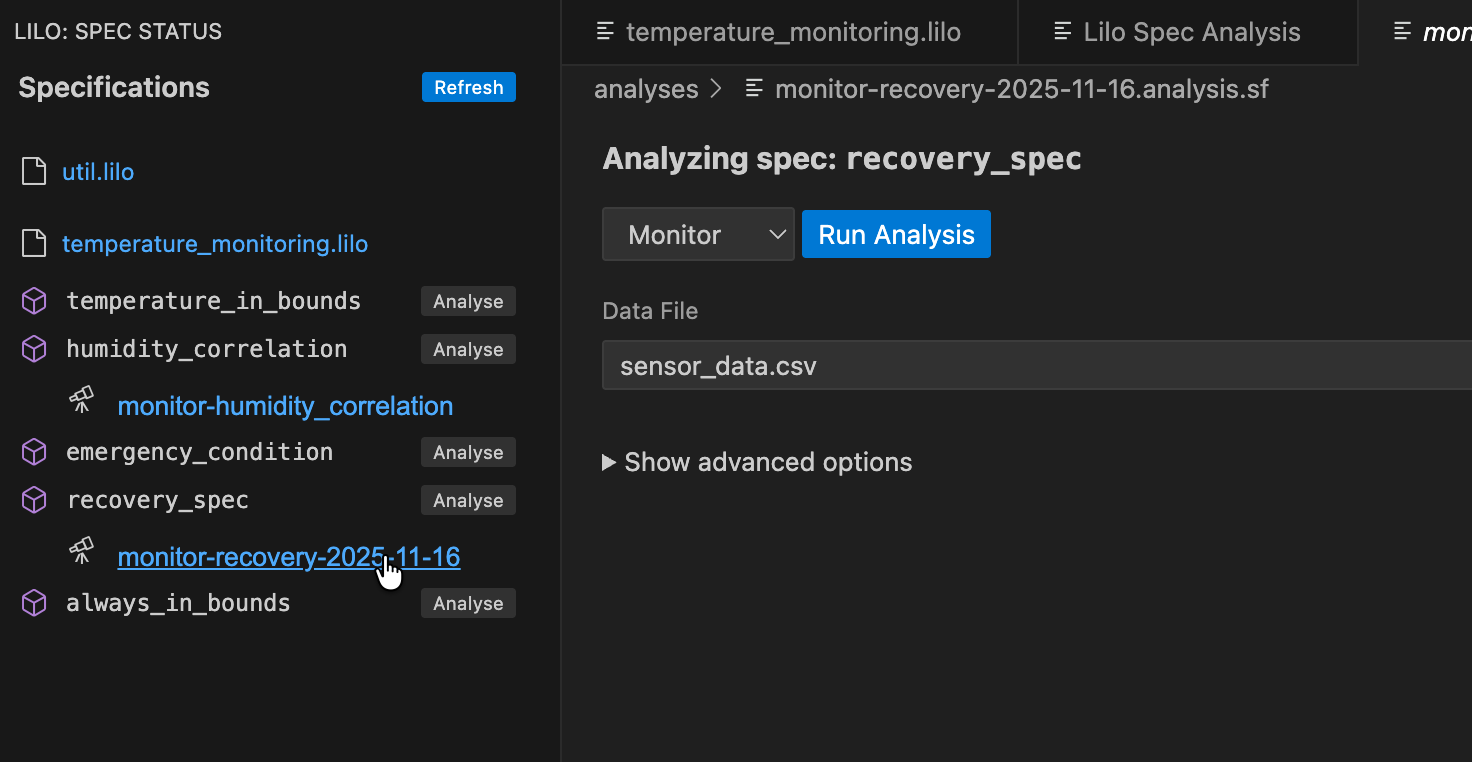

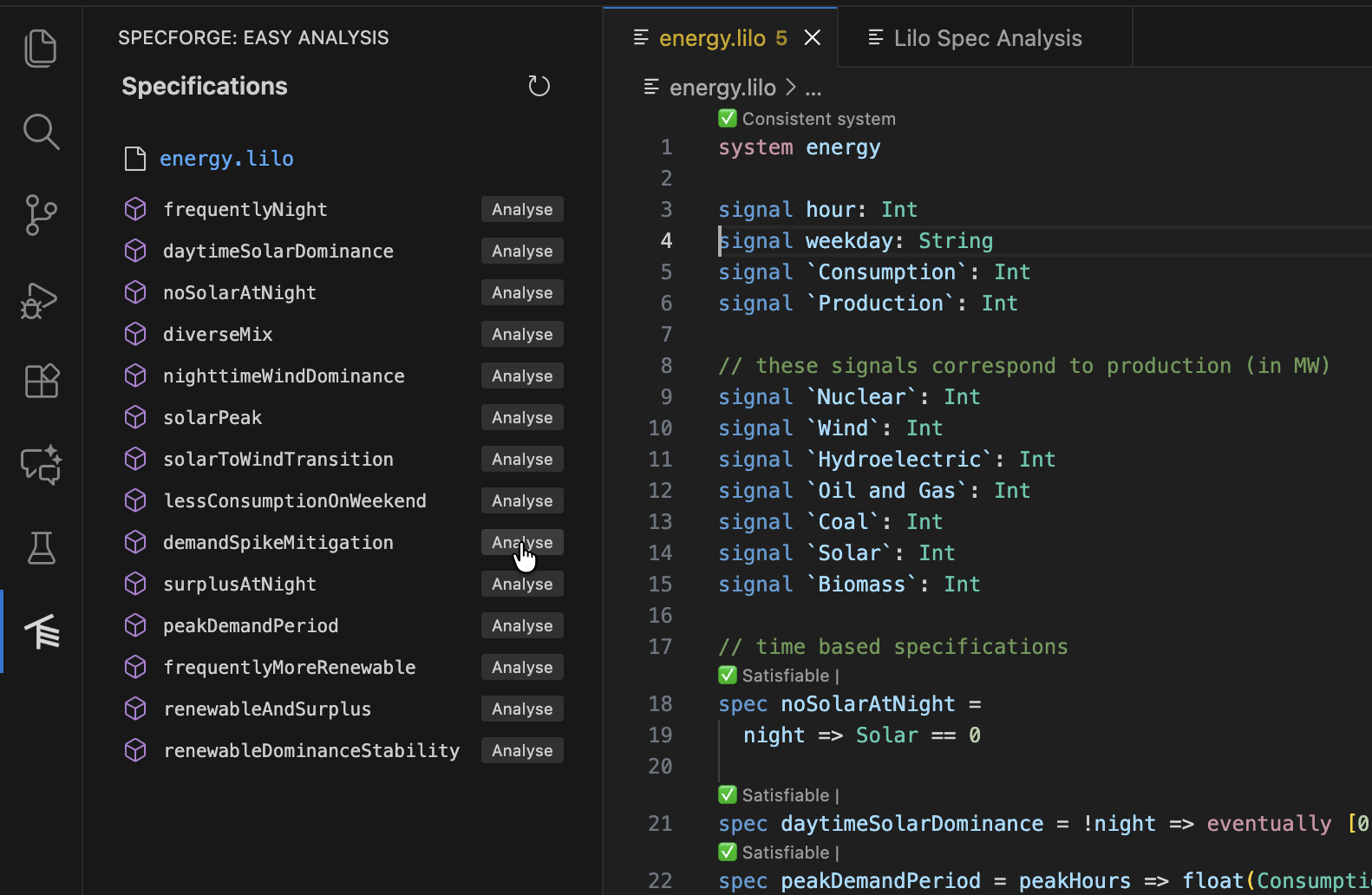

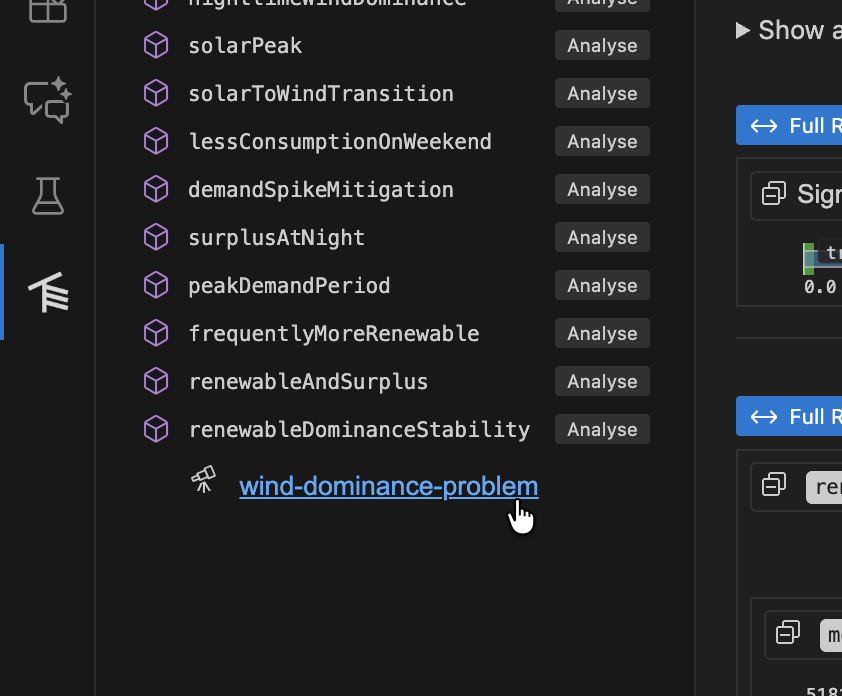

Navigate to the spec selection screen, and click the Analyse button for the spec you want to monitor.

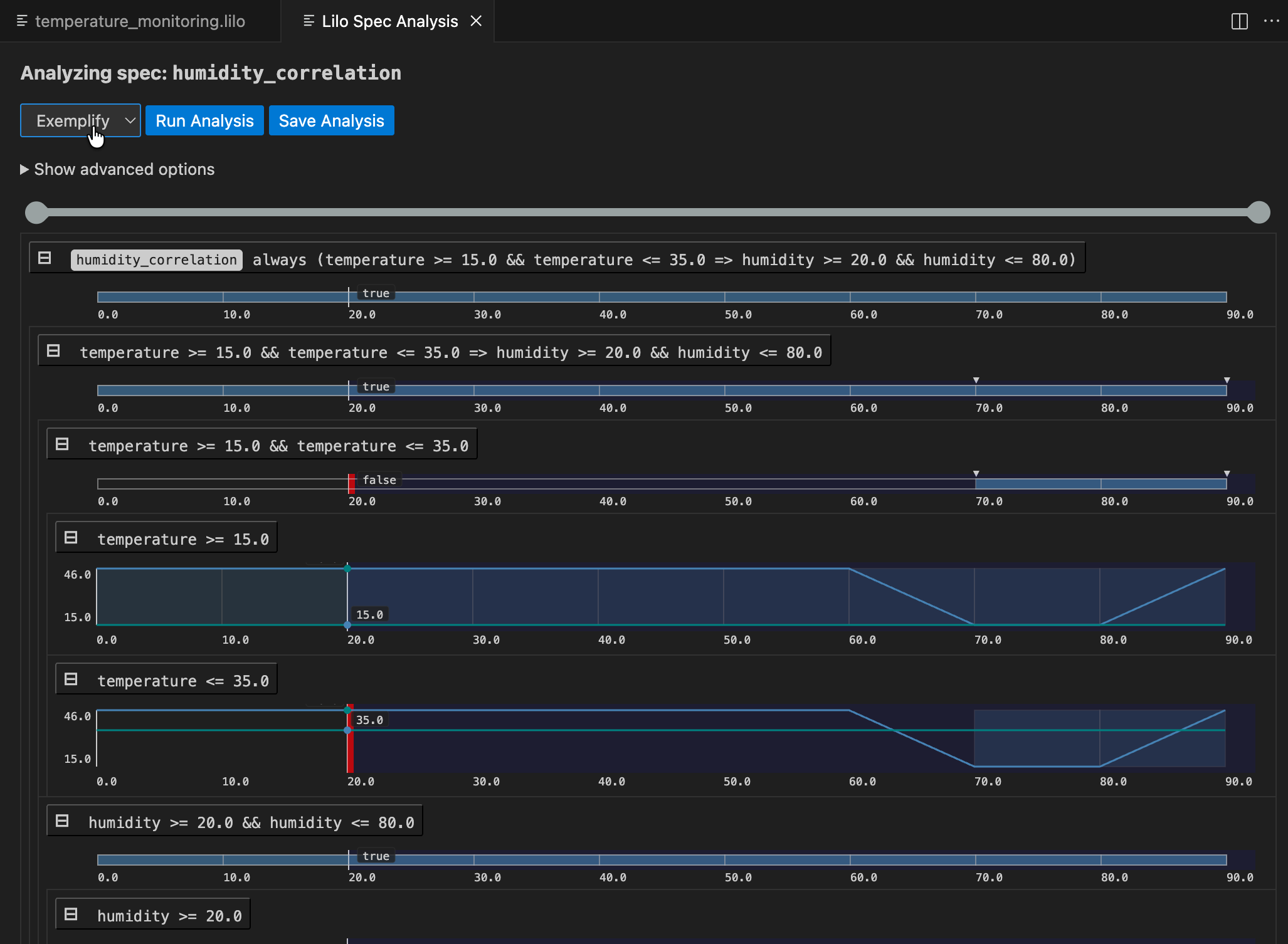

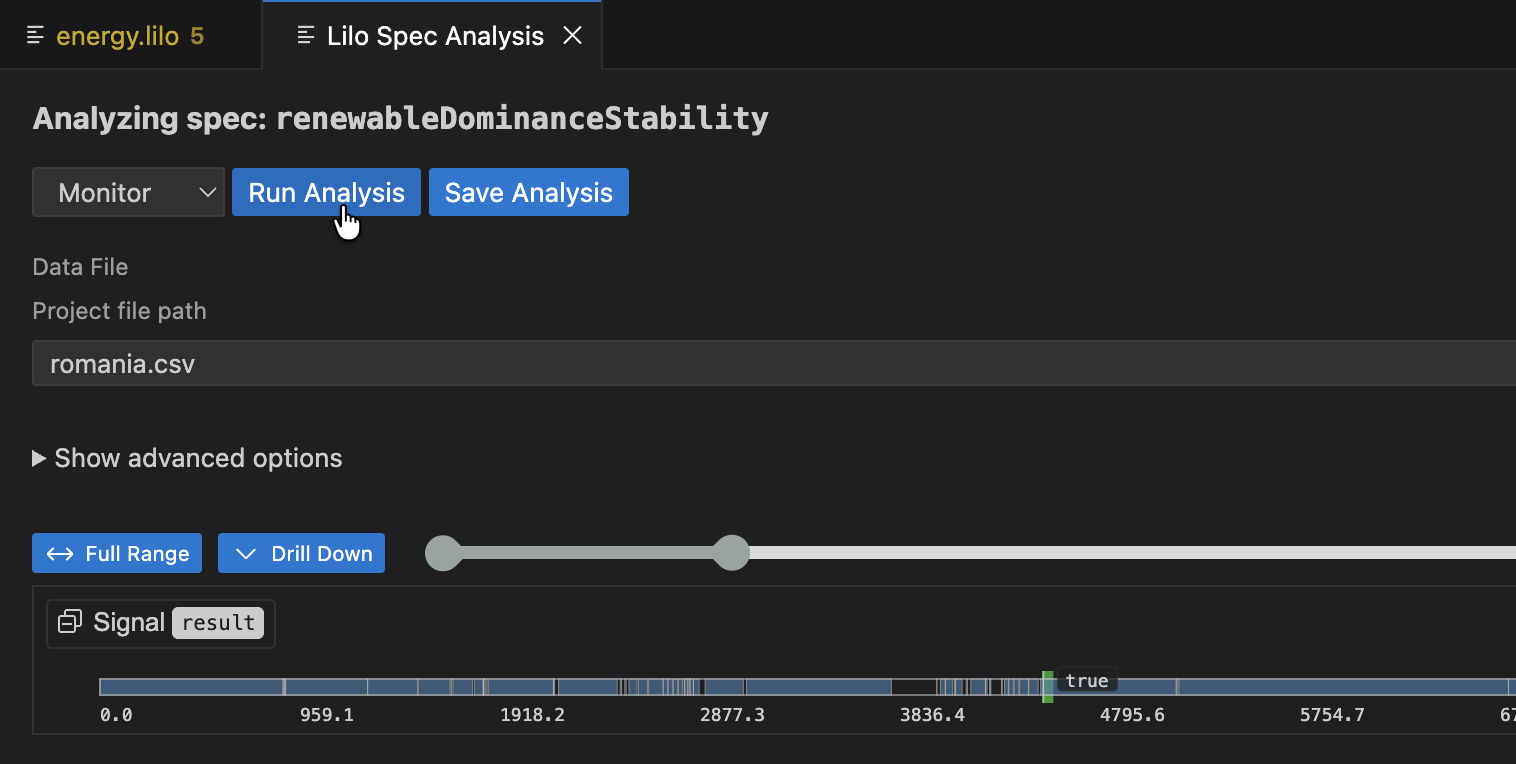

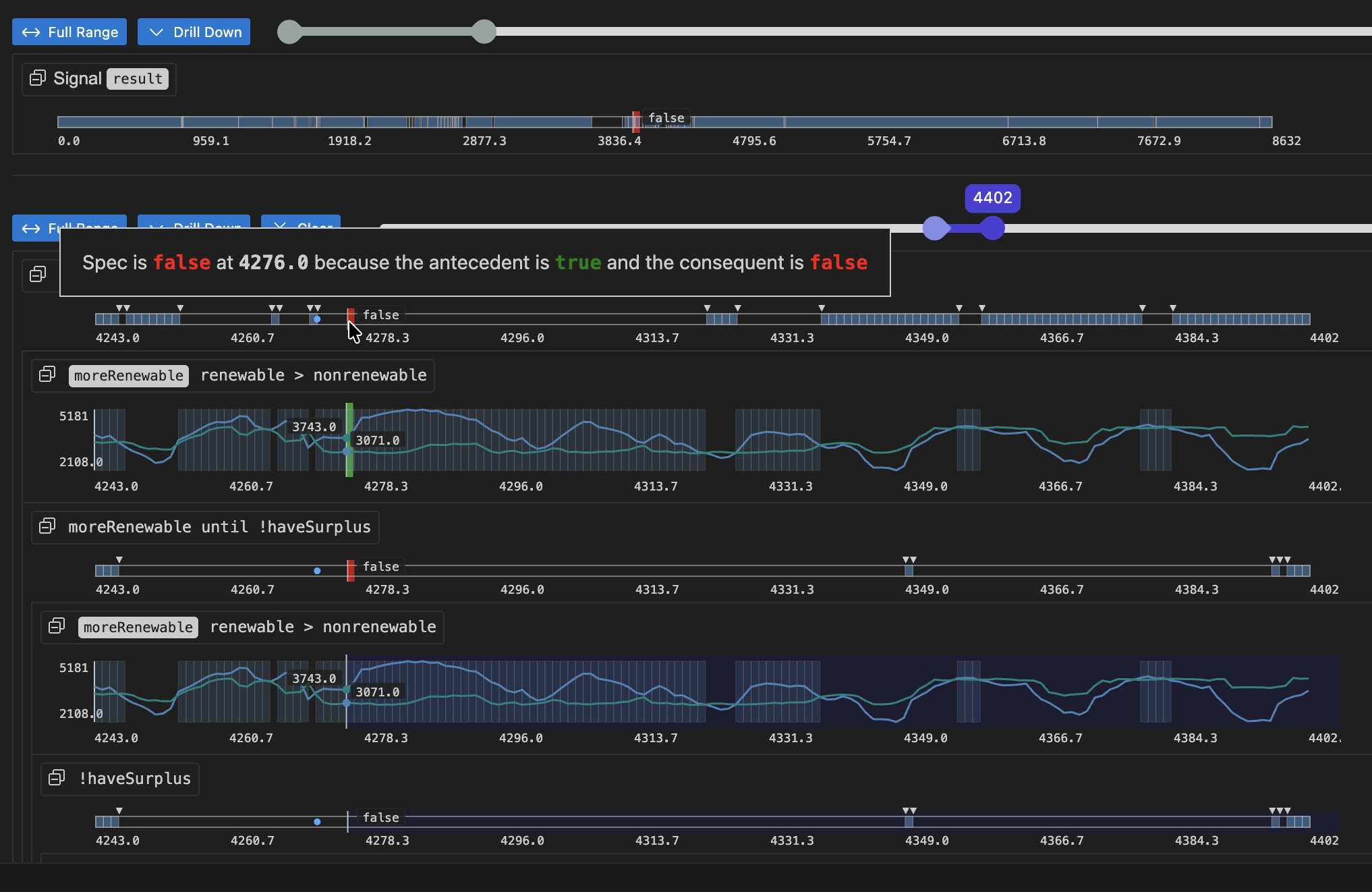

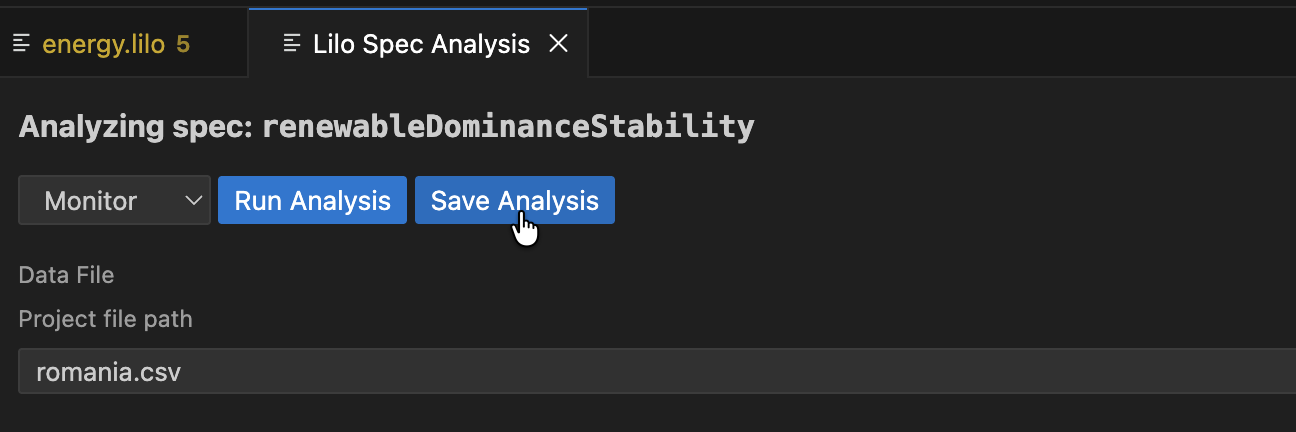

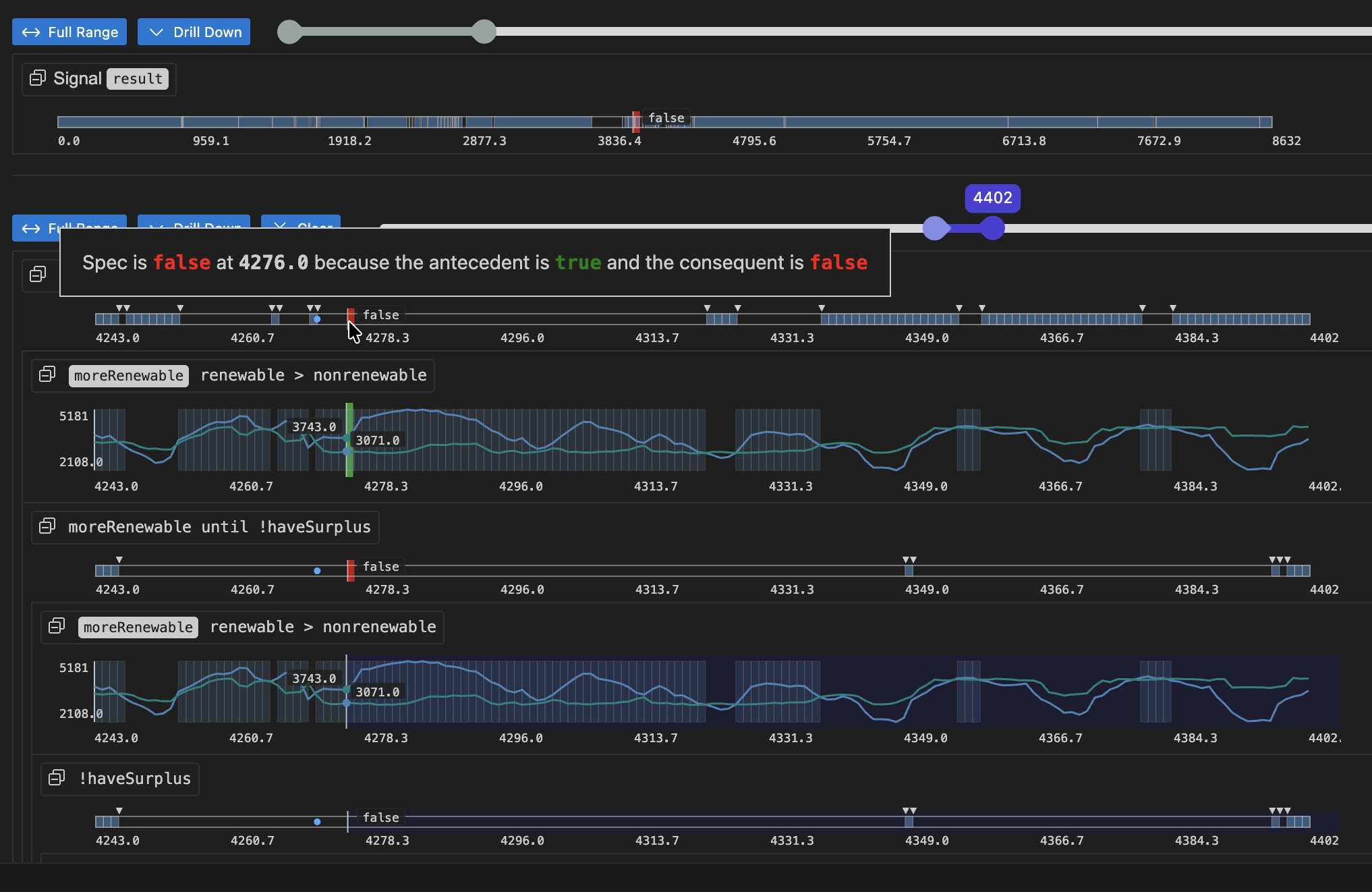

After selecting a data file from the dropdown menu, click Run Analysis. The result is an analysis monitoring tree for the specification:

The result for the whole specification is shown at the top. Below this, you can drill down into sub-expressions of the specification, to understand what makes the spec true or false at any given time. Hovering over any of the signals will show a popup with an explanation of the result at that point in time, and will highlight relevant segments of sub-expression result signals.

An analysis can be saved. To do so, click the Save Analysis button, and choose a location to save the analysis. You can then navigate to this analysis file and open it again in VSCode. The analysis will also show up in the specification status menu, under the relevant spec.

Exemplification

The Exemplify analysis generates example traces that demonstrate satisfying behavior. This is useful for:

- Understanding what valid system behavior looks like

- Testing other components with realistic data

- Creating animations

If the exemplified data does not behave as expected, the specification might be wrong and need to be corrected. Exemplification can thus be used as an aid when authoring specifications.

Falsification

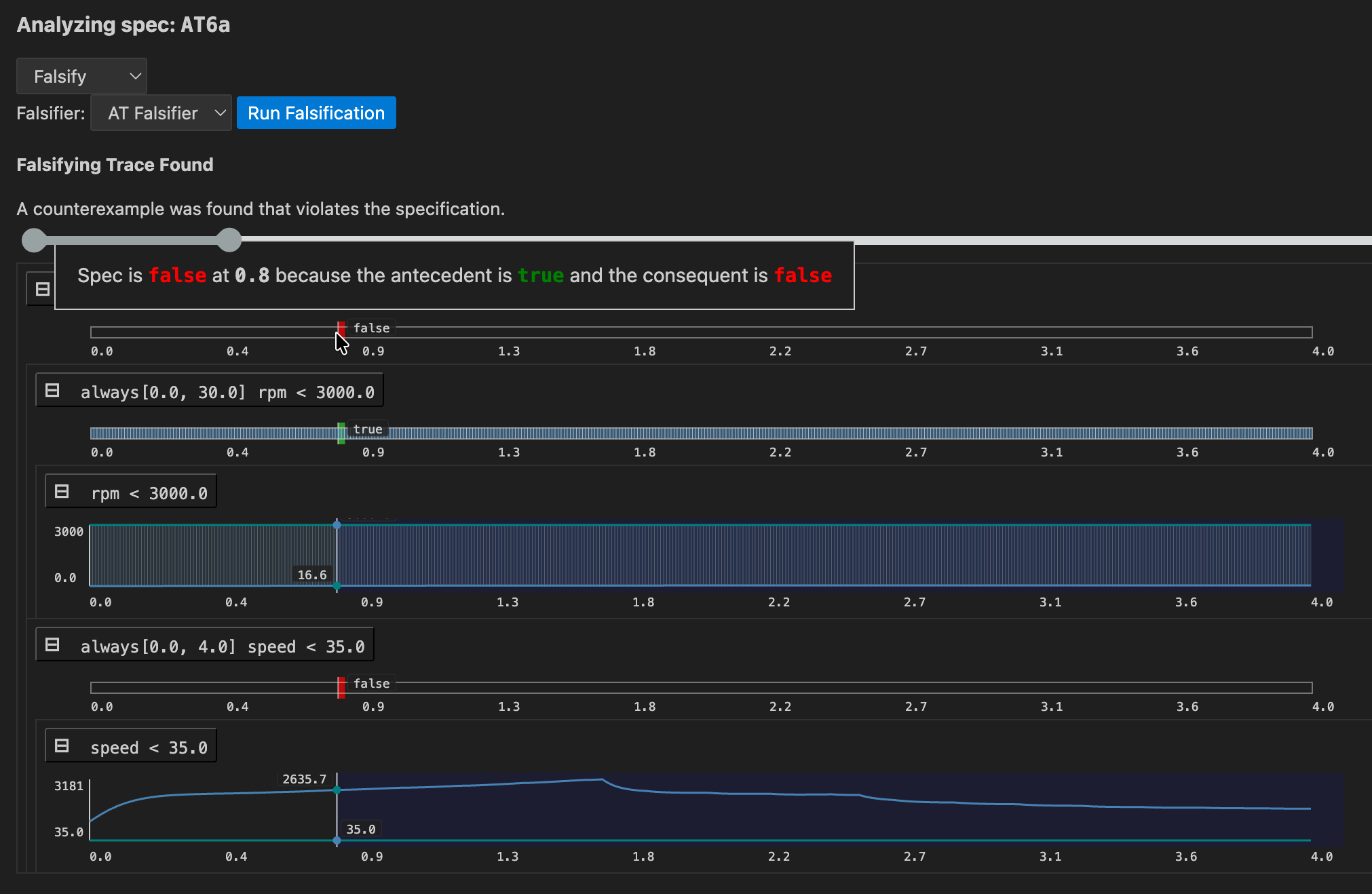

If a model for the system is available, falsification can be used to see if the model behaves as expected, that is, according to specification.

First a falsifier must be registered in lilo.toml, e.g.

name = "automatic-transmission"

source = "spec"

[[system_falsifier]]

name = "AT Falsifier"

system = "transmission"

script = "transmission.py"

Once this is done, the falsifier will show up in the Falsify analysis menu. If a falsifying signal is found, the monitoring tree is show, to help understand how the model went wrong:

Export

Export converts your specifications to other formats, to be used in other tools. For example, if you want to export your specification to JSON format, choose .json as the Export type.

Next Steps

This tour covered the basics of what SpecForge can do. The following chapters dive deeper into:

- The full Lilo language (Lilo Language)

- System definitions and composition (Systems)

- The Python SDK for programmatic access (Python SDK)

Lilo Language

Lilo is a formal specification language designed for describing, verifying and monitoring the behavior of complex, time-dependent systems.

Lilo allows you to:

- Write expressions using a familiar syntax with powerful temporal operators for defining properties over time.

- Define data structures using records to model your system’s data.

- Structure your specifications using systems.

Language Basics

Types and Expressions

Lilo is an expression-based language. This means that most constructs, from simple arithmetic to complex temporal properties, are expressions that evaluate to a time-series value. This section details the fundamental building blocks of Lilo expressions.

Comments

Comment blocks start with /* and end with */. Everything between these markers is ignored.

A line comment start with //, and indicates that the rest of the line is a comment.

Docstrings start with /// instead of //, and attach documentation to various language elements.

Primitive types

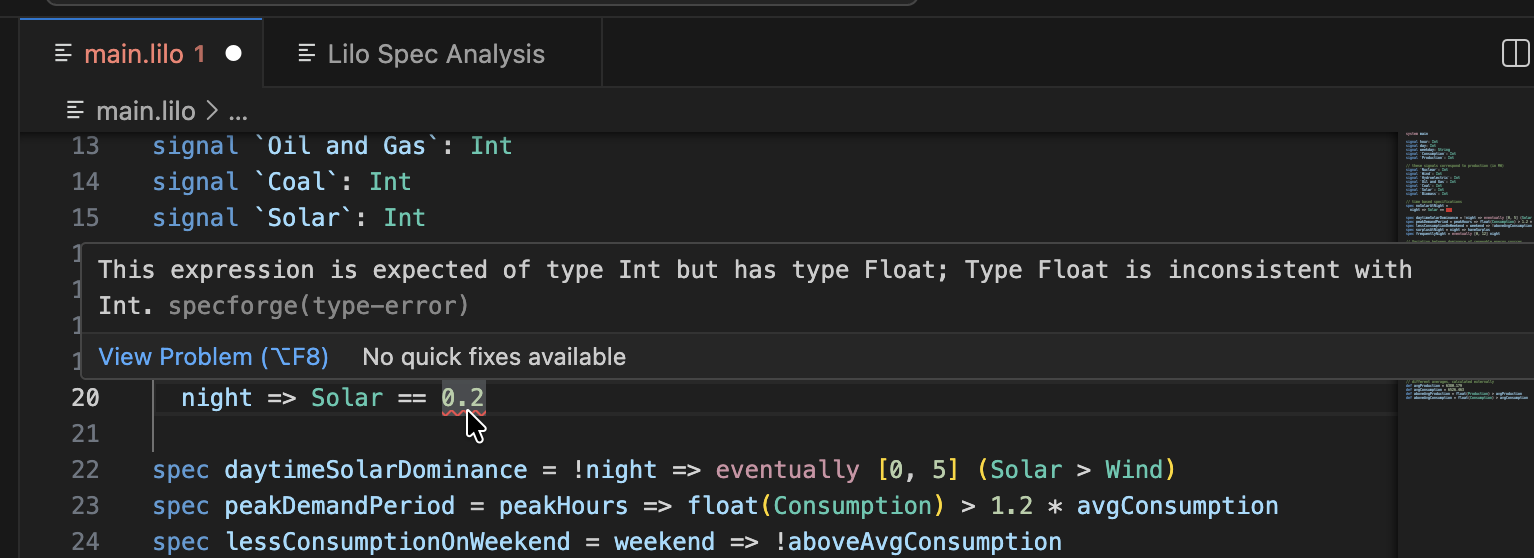

Lilo is a typed language. The primitive types are:

Bool: Boolean values. These are writtentrueandfalse.Int: Integer values, e.g.42.FloatFloating point values, e.g.42.3.String: Text strings, written between double-quotes, e.g."hello world".

Type errors are signaled to the user like this:

def x: Float = 1.02

def n: Int = 42

def example = x + n

The code blocks in this documentation page can be edited, for example try changing the type of n to Float to fix the type error.

Units of Measure

Lilo supports units of measure for Float values. Units are written in angle brackets <...> immediately after a literal.

Basic Units

A simple unit is written as an identifier inside angle brackets:

1.0<cm>

100.0<km/h>

A unit changes the type of the literal from Float to a dimensioned type. In the previous example, the type is changed from Float to Float<cm> or Float<km/h>.

Compound Units

Units can be combined using operators. The literal 1 represents a dimensionless unit:

60.0<1/s>

-

Ratio (

/):15.0<m/s> -

Product (

*):50.0<m*m> -

Exponentiation (

^):100.0<m^2>9.81<m*s^-2>

Operator Precedence and Associativity

Unit operators follow standard mathematical precedence rules:

- Exponentiation (

^) has the highest precedence and binds tightly to the immediately preceding unit. - Product (

*) and ratio (/) have equal precedence and associate left-to-right.

This means m/s*kg is interpreted as (m/s)*kg, and m*s^-2 means m*(s^-2), not (m*s)^-2.

Parentheses for Grouping

Parentheses can be used to override the default precedence and associativity:

1.0<1/(kg*m)>

Operations on Lieterals with Units

You can perform addition, subtraction and comparison on literals with units, such as:

100.0<km> + 10.0<km>

50.2<km> - 3.0<km>

6.5<km> > 3.6<km>

When performing these operations, the units of the first and second arguments must be the same. Otherwise, a type error will occur.

You can perform multiplication or division even if the units of the first and second arguments are different, such as:

100.0<km> * 2.0<s>

100.0<km> / 2.0<s>

These are evaluated as 200.0<km*s> and 50.0<km/s>, respectively.

Inference

Units can be inferred. In the following example, the type of speed is inferred to be Float<m/s>.

def f(speed) = speed * 5.0<s> <= 10.0<m>

Identification

Units are identified as strings. Thus, for example, m and km have no relation as units. To relate them, for example, define a function to convert from km to m.

def km_to_m(kilometre: Float<km>) = kilometre * 1000.0<m/km>

Declaring Units

Units used in type annotations must be declared with the unit keyword:

unit km

unit h

unit s

Once declared, units can be used in signal and definition type annotations:

signal speed: Float<km/h>

signal distance: Float<km>

Operators

Lilo uses the following operators, listed in order of precedence (from highest to lowest).

-

Prefix negation:

-xis the additive inverse ofx, and!xis the negation ofx. -

Multiplication and division:

x * y,x / y. -

Addition and subtraction:

x + yandx - y. -

Numeric comparisons:

==: equality!=: non-equality>=: greater than or equals<=: less than or equals>: greater than (strict)<: less than (strict) Comparisons can be chained, in a consistent direction. E.g.0 < x <= 10means the same thing as0 < x && x <= 10.

-

Temporal operators (See Semantics of Temporal Operators for details):

always φ:φis true at all times in the future.eventually φ:φis true at some point in the future.past φ:φwas true at some time in the past.historically φ:φwas true at all times in the past.will_change φ:φchanges value at some point in the future.did_change φ:φchanged value at some point in the past.φ since ψ:φis true at all points in the past, from some point whereψwas true.φ until ψ:φis true at all points in the future untilψbecomes true.φ releases ψ: shortcut for!(!φ until !ψ).next f: The value off(not necessarily boolean) at the next (discrete) time point, or retains its value if there is no next time point.previous f: The value off(not necessarily boolean) at the previous (discrete) time point, or retains its value if there is no previous time point.previous_with v f: The value off(not necessarily boolean) at the previous (discrete) time point, orvif there is no previous time point.next_with v f: The value off(not necessarily boolean) at the next (discrete) time point, orvif there is no next time point.

One can chain unary temporal operators without parentheses, e.g,

always eventually (x < 0).Temporal operators can be qualified with intervals:

always [a, b] φ:φis true at all times betweenaandbtime units in the future.eventually [a, b] φ:φis true at some point betweenaandbtime units in the future.φ until [a, b] ψ:φis true at all points between now and some point betweenaandbtime units in the future untilψbecomes true.φ releases [a, b] ψ: shortcut for!(!φ until [a, b] !ψ).- Similar interval qualifications apply to other temporal operators.

- One can use

infinityin intervals:[0, infinity].

-

Sliding Window Operators

max_future [0, a] φ: the maximum value ofφin the nextatime units.min_future [0, a] φ: the minimum value ofφin the nextatime units.max_past [0, a] φ: the maximum value ofφin the pastatime units.min_past [0, a] φ: the minimum value ofφin the pastatime units.- Note that the interval must be of the form

[0, a]. - Similar to other temporal operators, one can omit the interval to mean

[0, infinity].

-

Conjunction:

x && y, bothxandyare true. -

Disjunction:

x || y, one ofxoryis true. -

Implication and equivalence:

x => y: ifxis true, thenymust also be true.x <=> y:xis true if and onlyyis true.

Note that prefix operators cannot be chained. So one must write -(-x), or !(next φ).

Built-in functions

There are built-in functions:

-

floatwill produce aFloatfrom anInt:def n: Int = 42 def x: Float = float(n) -

timewill return the current time of the signal. -

sqrtreturns the square root of a numeric value. The argument must be anIntor dimensionlessFloat:sqrt(x) -

absreturns the absolute value of a numeric value. The argument must be anIntorFloat, and units are preserved:abs(x) -

maxreturns the maximum of two or more numeric values. All arguments must have the same type and units:max(x, y) -

minreturns the minimum of two or more numeric values. All arguments must have the same type and units:min(x, y)

Conditional Expressions

Conditional expressions allow a specification to evaluate to different values based on a boolean condition. They use the if-then-else syntax.

if x > 0 then "positive" else "non-positive"

A key feature of Lilo is that if/then/else is an expression, not a statement. This means it always evaluates to a value, and thus the else branch is mandatory.

The expression in the if clause must evaluate to a Bool. The then and else branches must produce values of a compatible type. For example, if the then branch evaluates to an Int, the else branch must also evaluate to an Int.

Conditionals can be used anywhere an expression is expected, and can be nested to handle more complex logic.

// Avoid division by zero

def safe_ratio(numerator: Float, denominator: Float): Float =

if denominator != 0.0 then

numerator / denominator

else

0.0 // Return a default value

// Nested conditional

def describe_temp(temp: Float): String =

if temp > 30.0

then "hot"

else if temp < 10.0

then "cold"

else

"moderate"

Note that if _ then _ else _ is pointwise, meaning that the condition applies to all points in time, independently.

Case Expressions

If all the branches of a conditional expressions are Bool, you can use a cases expression.

cases {

temp > 30.0 -> eventually temp < 20.0;

temp < 10.0 -> eventually temp > 20.0;

10.0 <= temp <= 30.0 -> true;

}

This is interpreted as (temp > 30.0) => (eventually temp < 20.0) && (temp < 10.0 => eventually temp > 20.0) && (10.0 <= temp <= 30.0 => true). Thus, the case 10.0 <= temp <= 30.0 -> true can be omitted. However, we recommend using exhaustive and disjoint conditions for clarity.

Records

Records are composite data types that group together named values, called fields. They are essential for modeling structured data within your specifications.

The Lilo language supports anonymous, structurally typed, extensible records.

Construction and Type

You can construct a record value by providing a comma-separated list of field = value pairs enclosed in curly braces. The type of the record is inferred from the field names and the types of their corresponding values.

For example, the following expression creates a record with two fields: foo of type Int and bar of type String.

{ foo = 42, bar = "hello" }

The resulting value has the structural type { foo: Int, bar: String }. The order of fields in a constructor does not matter.

You can also declare a named record type using a type declaration, which is highly recommended for clarity and reuse.

/// Represents a point in a 2D coordinate system.

type Point = { x: Float, y: Float }

// Construct a value of type Point

def origin: Point = { x = 0.0, y = 0.0 }

Field punning

When you already have a name in scope that should be copied into a record, you can pun the field by omitting the explicit assignment. A pun such as { foo } is shorthand for { foo = foo }.

def foo: Int = 42

def bar: String = "hello"

def record_with_puns = { foo, bar }

Punning works anywhere record fields are listed, including in record literals and updates. Each pun expands to a regular field = value pair during typechecking.

Path field construction

Nested records can be created or extended in one step by assigning to a dotted path. Each segment before the final field refers to an enclosing record, and the compiler will merge the pieces together.

type Engine = { status: { throttle: Int, fault: Bool } }

def default_engine: Engine =

{ status.throttle = 0, status.fault = false }

The order of path assignments does not matter; the paths are merged into the final record. A dotted path cannot be combined with punning; write { status.throttle = throttle } instead of { status.throttle } when you need the path form.

Record updates with with

Use { base with fields } to copy an existing record and override specific fields. Updates respect the same syntax rules as record construction: you can mix regular assignments, puns, and dotted paths.

type Engine = { status: { throttle: Int, fault: Bool } }

def base: Engine =

{ status.throttle = 0, status.fault = false }

def warmed_up: Engine =

{ base with status.throttle = 70 }

def acknowledged: Engine =

{ warmed_up with status.fault = false }

All updated fields must already exist in the base record. Path updates let you rewrite deeply nested pieces without rebuilding the entire structure.

Projection

To access the value of a field within a record, you use the dot (.) syntax. If p is a record that has a field named x, then p.x is the expression that accesses this value.

type Point = { x: Float, y: Float }

def is_on_x_axis(p: Point): Bool =

p.y == 0.0

Records can be nested, and projection can be chained.

type Point = { x: Float, y: Float }

type Circle = { center: Point, radius: Float }

def is_unit_circle_at_origin(c: Circle): Bool =

c.center.x == 0.0 && c.center.y == 0.0 && c.radius == 1.0

Local Bindings

Local bindings allow you to assign a name to an expression, which can then be used in a subsequent expression. This is accomplished using the let keyword and is invaluable for improving the clarity, structure, and efficiency of your specifications.

A local binding takes the form let name = expression1; expression2. This binds the result of expression1 to name. The binding name is only visible within expression2, which is the scope of the binding.

The primary purposes of let bindings are:

- Readability: Breaking down a complex expression into smaller, named parts makes the logic easier to follow.

- Re-use: If a sub-expression is used multiple times, binding it to a name avoids repetition and potential re-computation.

Consider the following formula for calculating the area of a triangle’s circumcircle from its side lengths a, b, and c:

def circumcircle(a: Float, b: Float, c: Float): Float =

(a * b * c) / sqrt((a + b +c) * (b + c - a) * (c + a - b) * (a + b - c))

Using let bindings makes the logic much clearer:

def circumcircle(a: Float, b: Float, c: Float): Float =

let pi = 3.14;

let s = (a + b + c) / 2.0;

let area = sqrt(s * (s - a) * (s - b) * (s - c));

let circumradius = (a * b * c) / (4.0 * area);

circumradius * circumradius * pi

The type of the bound variable (s, area, circumradius) is automatically inferred from the expression it is assigned. You can also chain multiple let bindings to build up a computation step-by-step.

Systems

Systems

Ultimately Lilo is used to specify systems. A system groups together declarations for the temporal input signals, the (non-temporal) parameters and the specifications. A system also includes auxiliary definitions.

A system file should start with a system declaration, e.g.:

system Engine

The name of the system should match the file name.

Type declarations

A new type is declared with the type keyword. To define a new record type Point:

type Point = { x: Float, y: Float }

We can then use Point as a type anywhere else in the file.

Signals

The time varying values of the system are called signals. They are declared with the signal keyword. E.g.:

signal x: Float

signal y: Float

signal speed: Float

signal rain_sensor: Bool

signal wipers_on: Bool

The definitions and specifications of a system can freely refer to the system’s signals.

A signal can be of any type that does not contain function types, i.e. a combination of primitive types and records.

System Parameters

Variables of a system which are constant over time are called system parameters. They are declared with the param keyword. E.g.:

param temp_threshold: Float

param max_errors: Int

The definitions and specifications of a system can freely refer to the system’s parameters. Note that system parameters must be provided upfront before monitoring can begin. For exemplification, system parameters are optional. That is, they can be provided, in which case the example must conform to them, or otherwise the exemplification process will try to find values that work.

Definitions

A definition is declared with the def keyword:

def foo: Int = 42

A definition can depend on parameters:

def foo(x: Float) = x + 42

One can also specify the return type of a definition:

def foo(x: Float): Float = x + 42

The type annotations on parameters and the return type are both optional, if they are not provided they are inferred. It is recommended to always specify these types as a form of documentation.

The parameters of a definition can also be be record types, for instance:

type S = { x: Float, y: Float }

def foo(s: S) = eventually [0,1] s.x > s.y

Definitions can be used in other definitions, e.g.:

type S = { x: Float, y: Float }

def more_x_than_y(s: S) = s.x > s.y

def foo(s: S) = eventually [0,1] more_x_than_y(s)

Definitions can be specified in any order, as long as this doesn’t create any circular dependencies.

Definitions can freely use any of the signals of the system, without having to declare them as parameters.

Specifications

A spec says something that should be true of the system. They can use all the signals and defs of the system. They are declared using the spec keyword. They are much like defs except:

- The return type is always

Bool(and doesn’t need to be specified) - They cannot have parameters.

Example:

signal speed: Float

def above_min = 0 <= speed

def below_max = speed <= 100

spec valid_speed =

always (above_min && below_max)

Assumptions

An assumption declares a property that is taken as given when analysing the system. Syntactically it is like a spec — no parameters, return type is always Bool — but it is treated differently by the tooling: assumptions are automatically included as constraints during exemplification and satisfiability checking, rather than being something to verify.

signal temperature: Float

signal heater_on: Bool

assumption physics = always (heater_on => next temperature >= temperature)

spec eventually_warm = eventually (temperature > 30.0)

In this example, physics must hold for any example traces SpecForge generates, or when checking satisfiability for specs. See Exemplification and Satisfiability for more details on how assumptions interact with analysis.

Modules

Modules

Lilo language supports modules. A module starts with a module declaration, and contains definitions (much like a system):

module Util

def add(x: Float, y: Float) = x + y

pub def calc(x: Float) = add(x, x)

The name of the module must match the file name. For example, a module declared as module Util must be defined in a file named Util.lilo.

A module can only contain defs and types.

Those definitions which should be accessible from other modules should be parked as pub, which means “public”.

To use a module, one needs to import it, e.g. import Util. The public definitions from Util are then available to be used, with qualified names, e.g.:

import Util

def foo(x: Float) = Util::calc(x) + 42

One can import a module qualified with an alias, for example:

import Util as U

def foo(x: Float) = U::calc(x) + 42

To use symbols without a qualifier, use the use keyword:

import Util use { calc }

def foo(x: Float) = calc(x) + 42

To import units from another module, prefix each unit with the unit keyword in the use list:

import Units use { unit m, unit s }

Components

Components are a way of organizing Lilo Systems in hierarchical building blocks.

Consider the following Vehicle system, which is organized in the following way:

- A

Vehiclehas aPowerUnitand aBattery. - The battery consists of two

BatteryCells. - Each of the systems

PowerUnit,Battery,BatteryCellandVehiclehave their own signals, parameters and specifications.

The systems BatteryCell and PowerUnit do not have any components, so they can be declared in a straightforward way:

// BatteryCell.lilo

system BatteryCell

pub signal voltage: Float

pub signal temperature: Float

pub param nominal_voltage: Float

pub def over_voltage: Bool = voltage > nominal_voltage * 1.15

spec voltage_range = voltage >= 2.5 && voltage <= 4.5

// PowerUnit.lilo

system PowerUnit

pub signal temperature: Float

pub signal output: Float

pub param max_output: Float

pub def is_overheating: Bool = temperature > 85.0

spec output_bounded = output <= max_output

Here, the use of the pub keyword indicates that these signals, params and definitions will be accessible to other systems which instantiate these systems as components. Specifications declared with spec are always public.

Instantiating Components

The Battery system uses BatteryCell components, which can be declared using the component keyword:

// Battery.lilo

system Battery

pub signal level: Float

pub signal voltage: Float

pub param capacity: Float

component cell1: BatteryCell

// ...

Lifted vs Mapped Signals and Params

When a system is instantiated as a component in another system, its params and signals are lifted to the parent system. Thus, in addition to level, voltage and capacity, the schema for Battery involves cell1::voltage, cell1::temperature and cell1::nominal_voltage.

Some signals or params of a component may be mapped, meaning that they are not a part of the input schema, but their values are determined by other signals or params (of the parent system). For example, consider the following example where the schema of Battery still exposes cell1::voltage, cell1::temperature and cell1::nominal_voltage, as well as cell2::temperature, but not cell2::nominal_voltage or cell2::voltage. The value of cell2::nominal_voltage is fixed to 3.7, and the value of cell2::voltage is derived from the Battery’s voltage signal.

// Unmapped component - all signals and params will be lifted

component cell1: BatteryCell

// Partially mapped component - voltage is mapped, temperature is lifted

component cell2: BatteryCell {

param nominal_voltage = 3.7

signal voltage = voltage / 2.0 // Derived from battery voltage

}

Accessing Component Signals and Params

Lilo expressions in a system can refer to signals and params of their components using the :: operator to access the component’s signals and params.

// Use cell component signals

pub def any_cell_over_voltage: Bool = cell1::over_voltage || cell2::over_voltage

spec level_valid = level >= 0.0 && level <= 100.0

// Reference cell component specs

spec cells_safe = cell1::voltage_range && cell2::voltage_range

A system can refer to signals and params of components of its components. For example, consider the Vehicle system which has both a Battery and a PowerUnit component. One can write specifications in the Vehicle system in the following manner.

// Vehicle.lilo

// ...

// Two-level signal references

def low_battery: Bool = battery::level < 20.0

def power_hot: Bool = power::temperature > 80.0

def bat_cell1_voltage: Float = battery::cell1::voltage

def at_meihan: Bool = 34.63 < gps.lat < 34.65 && 135.99 < gps.lon < 136.01

// Three-level signal references (Vehicle -> Battery -> BatteryCell)

def cell1_overheated: Bool = battery::cell1::temperature > 65.0

def cell2_voltage_ok: Bool = battery::cell2::voltage > 3.0

// Two-level def references

def battery_or_power_issue: Bool = battery::is_low || power::is_overheating

// Three-level def references (Vehicle -> Battery -> BatteryCell)

def any_cell_overvoltage: Bool = battery::cell1::over_voltage || battery::cell2::over_voltage

// Combined two-level and three-level

def critical_state: Bool = battery::is_low && battery::any_cell_over_voltage

// ...

System Schema

The schema of a system refers to the set of signals and params that constitutes it input. When monitoring, the user provides values for elements of the schema. The schema corresponding to the Vehicle system is shown in the diagram below.

The SpecForge CLI schema command can be used to view the schema of a system. Notice that the mapped signals and params do not appear in the schema, since their values are derived from different signals or params.

$ schema --project /Users/agnishom/code/reqeng/api/examples/projects/vehicle -s Vehicle flat

Signals:

ambient_temp Float

battery::cell1::temperature Float

battery::cell1::voltage Float

battery::cell2::temperature Float

battery::level Float

battery::voltage Float

gps_lat Float

gps_lon Float

power::output Float

speed Float

Params:

battery::capacity Float

battery::cell1::nominal_voltage Float

temp_threshold Float (default: 75.0)

Refer to the documentation on Data Files for details on the format for providing values for the schema when monitoring.

Static Analysis

Static Analysis

System code goes though some code quality checks.

Consistency Checking

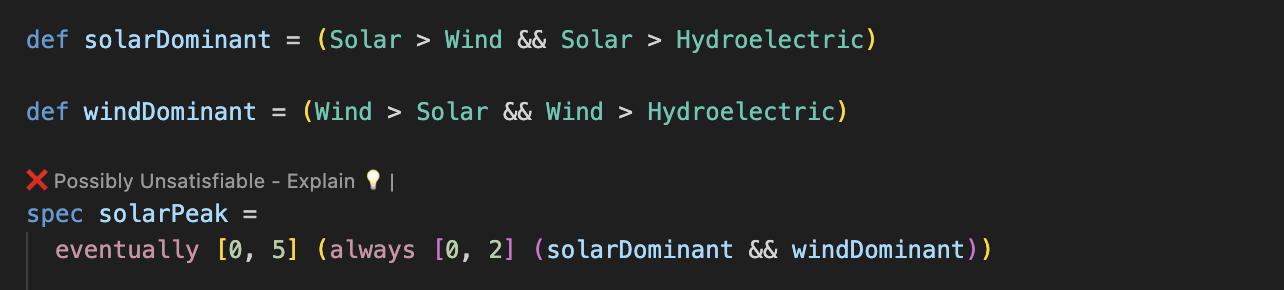

Specs are checked for consistency. A warning is produced if specs may be unsatisfiable:

signal x: Float

spec main = always (x > 0 && x < 0)

This means that the specification is problematic, because it is impossible that any system satisfies this specification.

Inconsistencies between specs are also reported to the user:

signal x: Float

spec always_positive = always (x > 0)

spec always_negative = always (x < 0)

In this case each of the specs are satisfiable on their own, but taken together they cannot be satisfied by any system.

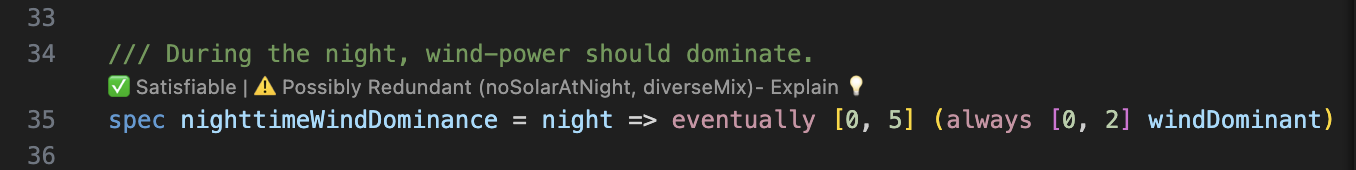

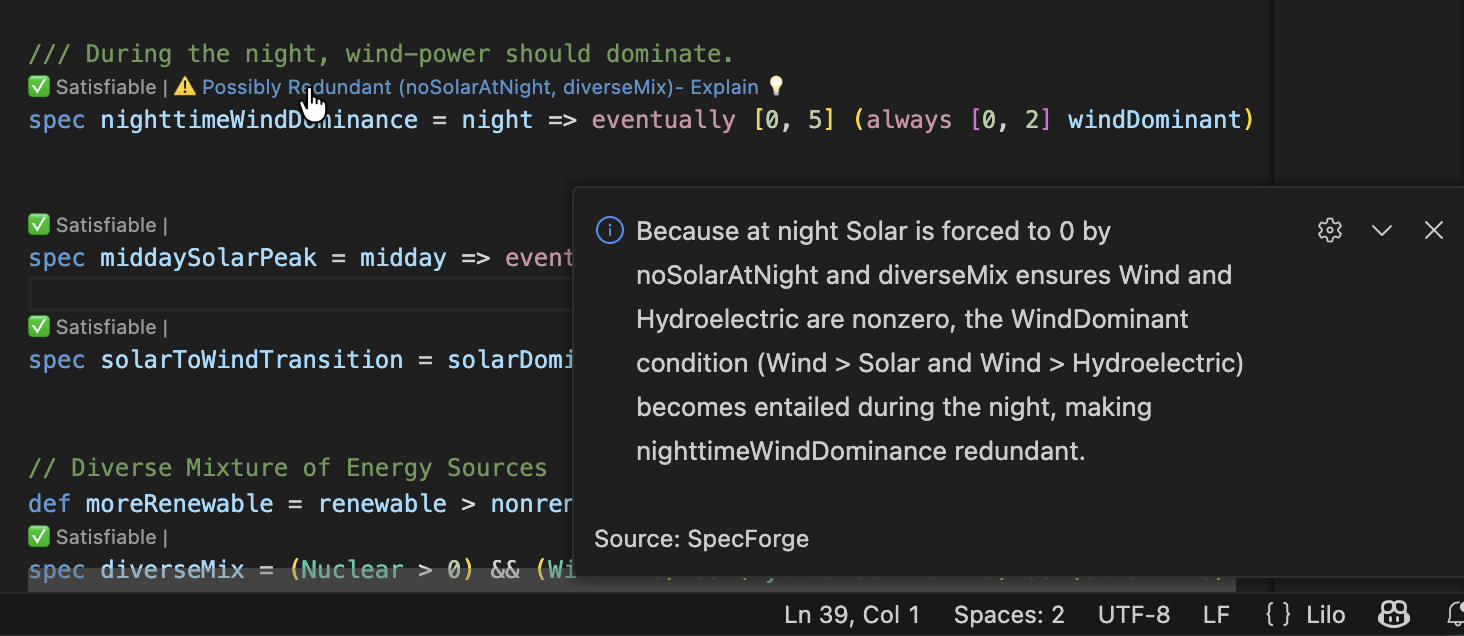

Redundancy Checking

If one spec is redundant, because implied by other specs of the system, this is also detected:

signal x: Float

spec positive_becomes_negative = always (x > 0 => eventually x < 0)

spec sometimes_positive = eventually x > 0

spec sometimes_negative = eventually x < 0

In this case we warn the user that the spec sometimes_negative is redundant, because this property is already implied by the combination of positive_becomes_negative and sometimes_positive. Indeed sometimes_positive implies that there is some point in time where x > 0, and using positive_becomes_negative we conclude that therefore there must be some point in time after than when x < 0.

Guard Analysis for Case Expressions

If a spec is a case expression, we check the guards in the case expression for satisfiability, exhaustiveness, and disjointness.

spec example = cases {

guard1 -> consequence1;

guard2 -> consequence2;

...

}

- Satisfiability: Whether each guard is satisfiable on its own.

- Exhaustiveness: Whether the guards taken together cover all possible cases.

- Disjointness: Whether the guards are mutually exclusive.

Additional Features

Attributes

In addition to docstrings (which begin with ///), Lilo definitions, specs, params and signals can be annotated with attributes. They must immediately precede the item they annotate.

The attributes are used to convey metadata about items they annotate which is used by the tooling (notably the VSCode extension).

#[key = "value", fn(arg), flag]

spec foo = true

- Suppressing unused variable warnings:

#[disable(unused)]- Using this attribute on a definition, param or signal will suppress warnings about it being unused.

- Specs or public definitions are always considered used.

- Timeout for Static Analyses

- To override the default timeout for static analyses, a

timeoutcan be specified in seconds. - They can be specified individually

#[timeout(satisfiability = 20, redundancy = 30)] - Or together

#[timeout(10)]which sets both to 10 seconds.

- To override the default timeout for static analyses, a

- Disabling static analyses

- Use

#[disable(satisfiability)]or#[disable(redundancy)]to disable specific static analyses on a definition.

- Use

- Soft assumptions:

#[rigidity = "soft"]- Applied to an

assumption, marks it as a soft constraint that the solver tries to satisfy but may relax if it conflicts with hard constraints. See Rigidity Levels for details.

- Applied to an

Labels, Aliases, and Custom Fields

Specifications can be annotated with labels, aliases, and custom fields, using attributes.

A label is a string attached to a specification. The VSCode extension’s spec sidebar groups specifications by label. This is useful for focusing on a subset of specifications, such as the safety-related ones.

#[label("safety", "critical")]

spec brake_must_work = always (brake_pedal => eventually (deceleration > 0))

An alias is an alternative name in a particular language. The spec sidebar shows the alias alongside the name used in the source file.

#[alias(en = "Brake Must Work", ja = "ブレーキは動作しなければならない")]

spec brake_must_work = always (brake_pedal => eventually (deceleration > 0))

A specification can have multiple #[label] or #[alias] attributes. All of them are applied.

A label can be associated with a color (represented via a hex code or any standard HTML5 color name) in the lilo.toml config file. To do so, simply add a section [labels.colors] like the following:

[labels.colors]

production = "blue"

consumption = "magenta"

renewable = "0x00ff00"

'mitigation strategy' = "yellow"

Custom fields attach extra scalar metadata to a specification. They are useful for project-specific categorization such as review status, owner, priority, etc.

#[field(priority = 1, reviewed = true, owner = "ops")]

spec brake_must_work = always (brake_pedal => eventually (deceleration > 0))

Custom field values must be scalar values (strings, numbers, booleans). For a single specification, if the same custom field is provided more than once, the first value is used and a warning is emitted.

The VSCode extension can use custom fields in the Spec Status Pane filter. For example:

reviewed:trueowner:"ops"priority:>=2

See VSCode Extension for the full query syntax.

Default Values for Parameters

When specifying a system, parameters can optionally be given a default value. The intent of such a default value is to indicate that the parameter is expected to be instantiated with the default value in a typical use case.

#[default = 25.0]

param temperature: Float

Default values should not be used to declare constants. For them, use a def instead.

def pi: Float = 3.14159

- When monitoring, parameters with default values can be omitted from the input. If omitted, the default value is used. They can also be explicitly provided, in which case the provided value is used.

- When exporting a formula, parameters with a default value will be substituted with the default value before the export.

- When exemplifying, the exemplifier will require the solver to fix the parameter to the default value.

When running an analysis such as export or exemplification, one can provide the JSON null value for a field in the config. This has the effect of requesting SpecForge to ignore the default value for the parameter.

system main

signal p: Int

#[default = 1]

param bound: Int

spec foo = p > 1 && p < bound

- Exemplification

- With

params = {}: Unsatisfiable (the default value ofboundis used) - With

params = { "bound": null }: Satisfiable (the solver is free to choose a value ofboundthat satisfies the constraints)

- With

- Export

- With

params = {}, result:p > 1 && p < 1 - With

params = { "bound": 100 }, result:p > 1 && p < 100 - With

params = { "bound": null }, result:p > 1 && p < bound

- With

Note that the JSON null value cannot be used as a default value as a part of the Lilo program.

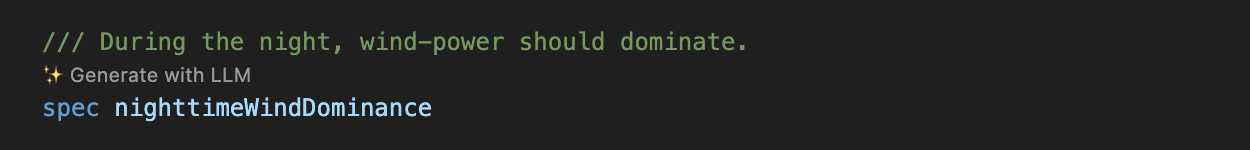

Spec Stubs

The user may create a spec stub, a spec without a body. Such a stub may still have a docstring and attributes. This can be used as a placeholder, and is interpreted as true by the Lilo tooling.

The VSCode extension will display a codelens to generate an implementation for the stub based on the docstring using an LLM (if configured).

/// The system should always eventually recover from errors.

spec error_recovery

Stubs can also be used for assumptions which have not yet been formalized.

/// height is always non-negative

assumption height_non_negative

Conventions

Some languages require that certain classes of names be capitalized or not, to distinguish them. Lilo is flexible, so that it can match the naming conventions of the system it is being used to specify. That said, here are the conventions that we use in the examples:

- Module and systems are lowercase snake_case. So e.g.

climate_controlrather thanClimateControl. - Important: The name of a module or system must match the file name it is defined in. For example,

module climate_controlorsystem climate_controlmust be defined in a file namedclimate_control.lilo. - Names of

signals,params,defs,specs, arguments and record field names are lowercase and snake_case. So e.g.signal wind_speedrather thansignal WindSpeedorsignal windSpeed. - Types, including user defined ones, should be capitalized and CamelCase. E.g.

type Plane = { wind_speed: Float, ground_speed: Float }

Semantics of Temporal Operators

Lilo adopts a semantics for temporal logic that is slightly non-standard, in the sense that it takes into account both a discrete and a continuous notion of time.

Let T be a type. A sample of type T is a pair {time : Real, value : T}. A signal of type T is a finite sequence of at-least two samples of type T such that the time values are strictly increasing. We write Signal[T] to be the set of all signals of type T. We do not require the first sample of a signal to be at time 0. Given a signal σ, we denote the elements of σ as σ[0], σ[1], …, σ[n-1] where n is the number of samples of σ. Note that we distinguish between the number of samples of σ and the duration of σ (which is σ[n-1].time - σ[0].time).

The support of a signal σ, denoted support(σ), is the set of time values associated with the samples of σ, i.e., support(σ) = {σ[i].time | 0 ≤ i < n}. We write σ[time = t] to denote the value of σ at time t, i.e, σ[time = t] = σ[i].value where i is such that σ[i].time = t. Note that σ[time = t] is only defined for t ∈ support(σ).

Each Lilo formula φ is judged to be of some type T (written φ : T), by the Lilo type system. The semantics of a Lilo formula of type T is understood as a function of type Signal[S] → Signal[T], where S is the type of the input signals described by the system. This function is defined to be support-preserving, i.e, the output signal is defined on exactly the same time values as the input signal. For a formula φ of type T, we write 〚φ〛 to denote this function.

Atemporal operators include the standard Boolean operators, arithmetic operators, comparison operators, conditionals and so on. A formula is said to a pointwise formula if for any fixed time value t, the value of the output signal 〚φ〛(σ)[time = t] depends only on the value of the input signal σ[time = t]. A lilo expression is said to be atemporal if its intepretation does not depend on the input signals. Thus, a pointwise formula is built from input signals and atemporal operators, and an atemporal expression is built from params, constants and atemporal operators.

An interval of real numbers is written as a pair [a, b] where a: Real and b: Real | infinity with a ≤ b. If b is infinity, we say that the interval is unbounded, and otherwise we say that the interval is bounded. For a time point t and an interval I, we write t + I or t - I to denote {t + x | x ∈ I} or {t - x | x ∈ I} respectively. In our case, since each of the temporal operators preserves support, we will write t + I and t - I as a shorthand for (t + I) ∩ support(σ) and (t - I) ∩ support(σ).

Temporal operators in Lilo, with some exceptions, are annotated with an interval. Given φ : Bool, we define the following temporal operators.

- Always:

〚always[I] φ〛(σ)[time = t] = trueif and only if for allt' ∈ (t + I),〚φ〛(σ)[time = t'] = true. - Eventually:

〚eventually[I] φ〛(σ)[time = t] = trueif and only if there existst' ∈ (t + I)such that〚φ〛(σ)[time = t'] = true. - Historically:

〚historically[I] φ〛(σ)[time = t] = trueif and only if for allt' ∈ (t - I),〚φ〛(σ)[time = t'] = true. - Past:

〚past[I] φ〛(σ)[time = t] = trueif and only if there existst' ∈ (t - I)such that〚φ〛(σ)[time = t'] = true. - Until:

〚φ until[I] ψ〛(σ)[time = t] = trueif and only if there existst' ∈ (t + I)such that〚ψ〛(σ)[time = t'] = trueand for allt'' ∈ support(σ)satisfyingt ≤ t'' < t',〚φ〛(σ)[time = t''] = true. - Since:

〚φ since[I] ψ〛(σ)[time = t] = trueif and only if there existst' ∈ (t - I)such that〚ψ〛(σ)[time = t'] = trueand for allt'' ∈ support(σ)satisfyingt' < t'' ≤ t,〚φ〛(σ)[time = t''] = true.

Note that the always and eventually operators are logical duals of each other. The logical dual of until is releases, i.e, φ releases[I] ψ is defined as !(!φ until[I] !ψ). The operators since, historically and past are the past-time counterparts of until, always and eventually respectively.

The operators will_change and did_change are defined for any well-typed φ : T for which equality is defined (which is currently all non-function types) as follows.

- Will Change:

〚will_change[I] φ〛(σ)[time = t] = trueif and only if there exists two pointst', t'' ∈ (t + I)such that〚φ〛(σ)[time = t'] ≠ 〚φ〛(σ)[time = t'']. - Did Change:

〚did_change[I] φ〛(σ)[time = t] = trueif and only if there exists two pointst', t'' ∈ (t - I)such that〚φ〛(σ)[time = t'] ≠ 〚φ〛(σ)[time = t''].

The following operators cannot be annotated with an interval. And importantly, they are defined on the discrete time-semantics, i.e, they depend on the discrete index associated with the samples rather than the associated time. If the base formula φ is of type T, and v is an atemporal value of type T, then so are the formulas next φ, previous φ, next_with v φ and previous_with v φ. We write n to denote the number of samples of the input signal σ.

- Next:

〚next φ〛(σ)[i] = 〚φ〛(σ)[i + 1]for0 ≤ i < n - 1and〚next φ〛(σ)[n - 1] = 〚φ〛(σ)[n - 1]. - Previous:

〚previous φ〛(σ)[i] = 〚φ〛(σ)[i - 1]for0 < i < nand〚previous φ〛(σ)[0] = 〚φ〛(σ)[0]. - Next With:

〚next_with v φ〛(σ)[i] = 〚φ〛(σ)[i + 1]for0 ≤ i < n - 1and〚next_with v φ〛(σ)[n - 1] = v. - Previous With:

〚previous_with v φ〛(σ)[i] = 〚φ〛(σ)[i - 1]for0 < i < nand〚previous_with v φ〛(σ)[0] = v.

The following sliding window operators are defined for any φ whose value is a numeric type. Note also that the associated interval must be of the form [0, b] where b is possibly infinity.

- Future Maximum:

〚max_future [0, a] φ〛(σ)[time = t] = max{〚φ〛(σ)[time = t'] | t' ∈ support(σ), t ≤ t' ≤ t + a}. - Past Maximum:

〚max_past [0, a] φ〛(σ)[time = t] = max{〚φ〛(σ)[time = t'] | t' ∈ support(σ), t - a ≤ t' ≤ t}. - Future Minimum:

〚min_future [0, a] φ〛(σ)[time = t] = min{〚φ〛(σ)[time = t'] | t' ∈ support(σ), t ≤ t' ≤ t + a}. - Past Minimum:

〚min_past [0, a] φ〛(σ)[time = t] = min{〚φ〛(σ)[time = t'] | t' ∈ support(σ), t - a ≤ t' ≤ t}.

Exemplification and Satisfiability

Exemplification is the process of generating example traces that satisfy a specification.

Satisfiability is a closely related concept: a spec is satisfiable if there exists at least one trace that makes it true. SpecForge’s static analysis automatically checks whether your specs are satisfiable and warns you if they are not.

Both exemplification and satisfiability checking are influenced by assumptions, which are additional constraints that the solver takes into account.

Assumptions

An assumption is a property that is taken for granted when analysing a specification. Instead of being something you want to verify, it represents background knowledge about the system: physical laws, environmental constraints, or operating conditions that are expected to hold.

The assumption Keyword

In Lilo, you can declare an assumption using the assumption keyword:

signal temperature: Float

signal heater_on: Bool

assumption physics = always (heater_on => next temperature >= temperature)

spec eventually_warm = eventually (temperature > 30.0)

An assumption is syntactically like a spec (it has no parameters and its return type is always Bool) but it is treated differently by the tooling:

- Exemplification: When generating example traces for any spec in the same system, all assumptions are automatically included as constraints. The solver must produce traces that satisfy both the spec and every assumption.